Eliezer Yudkowsky

American AI researcher and writer (born 1979) / From Wikipedia, the free encyclopedia


Eliezer S. Yudkowsky (/ˌɛliˈɛzər ˌjʌdˈkaʊski/ EH-lee-EH-zər YUD-KOW-skee;[1] born September 11, 1979) is an American artificial intelligence researcher[2][3][4][5] and writer on decision theory and ethics, best known for popularizing ideas related to friendly artificial intelligence.[6][7] He is the founder of and a research fellow at the Machine Intelligence Research Institute (MIRI), a private research nonprofit based in Berkeley, California.[8] His work on the prospect of a runaway intelligence explosion influenced philosopher Nick Bostrom's 2014 book Superintelligence: Paths, Dangers, Strategies.[9]


Eliezer Yudkowsky | |

|---|---|

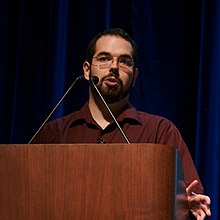

Yudkowsky at Stanford University in 2006 | |

| Born | Eliezer Shlomo[lower-alpha 1] Yudkowsky (1979-09-11) September 11, 1979 (age 44) |

| Organization | Machine Intelligence Research Institute |

| Known for | Coining the term friendly artificial intelligence Research on AI safety Rationality writing Founder of LessWrong |

| Website | www |
