Digital image processing

Algorithmic processing of digitally-represented images From Wikipedia, the free encyclopedia

Digital image processing is the use of a digital computer to process digital images through an algorithm.[1][2] As a subcategory or field of digital signal processing, digital image processing has many advantages over analog image processing. It allows a much wider range of algorithms to be applied to the input data and can avoid problems such as the build-up of noise and distortion during processing. Since images are defined over two dimensions (perhaps more), digital image processing may be modeled in the form of multidimensional systems. The emergence and development of digital image processing have been driven mainly by three factors: first, the development of computers;[3] second, the development of mathematics (especially the creation and improvement of discrete mathematics theory);[4] and third, the growing demand for a wide range of applications in environment, agriculture, military, industry and medical science.[5]

History

Many of the techniques of digital image processing, or digital picture processing as it was often called, were developed in the 1960s at Bell Laboratories, the Jet Propulsion Laboratory, Massachusetts Institute of Technology, University of Maryland, and a few other research facilities, with application to satellite imagery, wire-photo standards conversion, medical imaging, videophone, character recognition, and photograph enhancement.[6] The purpose of early image processing was to improve the quality of the image for human viewers: the input is a low-quality image, and the output is an image with improved quality. Common image processing tasks include image enhancement, restoration, encoding, and compression. The first successful application was at the American Jet Propulsion Laboratory (JPL), which applied techniques such as geometric correction, gradation transformation, and noise removal to the thousands of lunar photos sent back by the space probe Ranger 7 in 1964, taking into account the position of the Sun and the environment of the Moon. The resulting computer-generated map of the Moon's surface was a success. Later, more complex image processing was performed on the nearly 100,000 photos sent back by the spacecraft, producing a topographic map, a color map and a panoramic mosaic of the Moon and laying a solid foundation for human landing on the Moon.[7]

The cost of processing was fairly high, however, with the computing equipment of that era. That changed in the 1970s, when digital image processing proliferated as cheaper computers and dedicated hardware became available. This led to images being processed in real-time, for some dedicated problems such as television standards conversion. As general-purpose computers became faster, they started to take over the role of dedicated hardware for all but the most specialized and computer-intensive operations. With the fast computers and signal processors available in the 2000s, digital image processing has become the most common form of image processing, and is generally used because it is not only the most versatile method, but also the cheapest.

Image sensors

The basis for modern image sensors is metal–oxide–semiconductor (MOS) technology,[8] invented at Bell Labs between 1955 and 1960.[9][10][11][12][13][14] This led to the development of digital semiconductor image sensors, including the charge-coupled device (CCD) and later the CMOS sensor.[8]

The charge-coupled device was invented by Willard S. Boyle and George E. Smith at Bell Labs in 1969.[15] While researching MOS technology, they realized that an electric charge was analogous to the magnetic bubble and that it could be stored on a tiny MOS capacitor. As it was fairly straightforward to fabricate a series of MOS capacitors in a row, they connected a suitable voltage to them so that the charge could be stepped along from one to the next.[8] The CCD is a semiconductor circuit that was later used in the first digital video cameras for television broadcasting.[16]

The NMOS active-pixel sensor (APS) was invented by Olympus in Japan during the mid-1980s. This was enabled by advances in MOS semiconductor device fabrication, with MOSFET scaling reaching smaller micron and then sub-micron levels.[17][18] The NMOS APS was fabricated by Tsutomu Nakamura's team at Olympus in 1985.[19] The CMOS active-pixel sensor (CMOS sensor) was later developed by Eric Fossum's team at the NASA Jet Propulsion Laboratory in 1993.[20] By 2007, sales of CMOS sensors had surpassed CCD sensors.[21]

MOS image sensors are widely used in optical mouse technology. The first optical mouse, invented by Richard F. Lyon at Xerox in 1980, used a 5 μm NMOS integrated circuit sensor chip.[22][23] Since the first commercial optical mouse, the IntelliMouse introduced in 1999, most optical mouse devices use CMOS sensors.[24][25]

Image compression

An important development in digital image compression technology was the discrete cosine transform (DCT), a lossy compression technique first proposed by Nasir Ahmed in 1972.[26] DCT compression became the basis for JPEG, which was introduced by the Joint Photographic Experts Group in 1992.[27] JPEG compresses images down to much smaller file sizes, and has become the most widely used image file format on the Internet.[28] Its highly efficient DCT compression algorithm was largely responsible for the wide proliferation of digital images and digital photos,[29] with several billion JPEG images produced every day as of 2015.[30]

Medical imaging techniques produce very large amounts of data, especially from CT, MRI and PET modalities. As a result, storage and communications of electronic image data are prohibitive without the use of compression.[31][32] JPEG 2000 image compression is used by the DICOM standard for storage and transmission of medical images. The cost and feasibility of accessing large image data sets over low or various bandwidths are further addressed by use of another DICOM standard, called JPIP, to enable efficient streaming of the JPEG 2000 compressed image data.[33]

Digital signal processor (DSP)

Electronic signal processing was revolutionized by the wide adoption of MOS technology in the 1970s.[34] MOS integrated circuit technology was the basis for the first single-chip microprocessors and microcontrollers in the early 1970s,[35] and then the first single-chip digital signal processor (DSP) chips in the late 1970s.[36][37] DSP chips have since been widely used in digital image processing.[36]

The discrete cosine transform (DCT) image compression algorithm has been widely implemented in DSP chips, with many companies developing DSP chips based on DCT technology. DCTs are widely used for encoding, decoding, video coding, audio coding, multiplexing, control signals, signaling, analog-to-digital conversion, formatting luminance and color differences, and color formats such as YUV444 and YUV411. DCTs are also used for encoding operations such as motion estimation, motion compensation, inter-frame prediction, quantization, perceptual weighting, entropy encoding, variable encoding, and motion vectors, and decoding operations such as the inverse operation between different color formats (YIQ, YUV and RGB) for display purposes. DCTs are also commonly used for high-definition television (HDTV) encoder/decoder chips.[38]

Tasks

Digital image processing allows the use of much more complex algorithms, and hence, can offer both more sophisticated performance at simple tasks, and the implementation of methods which would be impossible by analogue means.

In particular, digital image processing is a concrete application of, and a practical technology based on:

Some techniques which are used in digital image processing include:

Digital image transformations

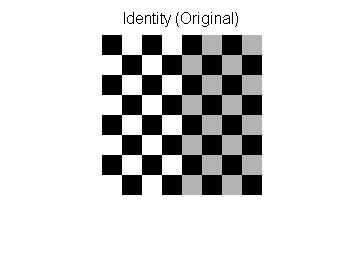

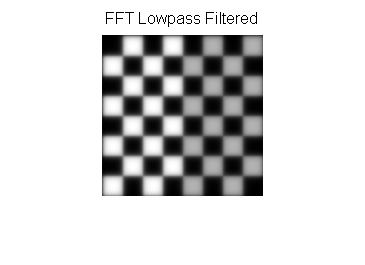

Filtering

Digital filters are used to blur and sharpen digital images. Filtering can be performed by:

- convolution with specifically designed kernels (filter array) in the spatial domain[39]

- masking specific frequency regions in the frequency (Fourier) domain

The following examples show both methods:[40]

Image padding in Fourier domain filtering

Images are typically padded before being transformed to Fourier space. The highpass-filtered images below illustrate the consequences of different padding techniques:

Notice that the highpass filter shows extra edges when zero padded compared to the repeated edge padding.
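The border effect described above can be reproduced on a tiny one-dimensional signal. The sketch below is only an illustration in plain Python, not the code behind the figures: it assumes a simple spatial-domain highpass kernel `[-1, 2, -1]` as a stand-in for the Fourier-domain filter, and the helper names `pad` and `highpass` are hypothetical.

```python
def pad(signal, width, mode):
    """Pad a 1-D list on both sides with zeros or with repeated edge values."""
    if mode == "zero":
        left, right = [0] * width, [0] * width
    elif mode == "edge":
        left, right = [signal[0]] * width, [signal[-1]] * width
    else:
        raise ValueError("mode must be 'zero' or 'edge'")
    return left + list(signal) + right


def highpass(signal):
    """Apply the kernel [-1, 2, -1] at every position that has both neighbours."""
    return [
        -signal[i - 1] + 2 * signal[i] - signal[i + 1]
        for i in range(1, len(signal) - 1)
    ]


flat = [5, 5, 5, 5]  # a constant signal contains no real edges

zero_response = highpass(pad(flat, 1, "zero"))
edge_response = highpass(pad(flat, 1, "edge"))

print(zero_response)  # [5, 0, 0, 5]: the jump from 0 to 5 shows up as an edge
print(edge_response)  # [0, 0, 0, 0]: repeated-edge padding adds no artificial edge
```

Zero padding introduces a step between the padding and the image content, and a highpass filter responds to that step as if it were a real edge.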

Filtering code examples

MATLAB example for spatial domain highpass filtering.

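```matlab
img = checkerboard(20);                  % generate a checkerboard test image

% ************************** SPATIAL DOMAIN ***************************

% 3x3 Laplacian kernel. The coefficients sum to zero, so flat regions map to 0.
% (A centre value of 5 would add the original image back, i.e. sharpen it.)
klaplace = [0 -1 0; -1 4 -1; 0 -1 0];

X = conv2(img, klaplace, 'same');        % convolve the test image with the kernel,
                                         % keeping the output the same size as img

figure()
imshow(X, [])                            % show the Laplacian-filtered image,
                                         % scaled to the range of the data
title('Laplacian Edge Detection')
```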

Affine transformations

Affine transformations enable basic image transformations including scale, rotate, translate, mirror and shear, as shown in the following examples:[40]

To apply the affine matrix to an image, the image is converted to a matrix in which each entry corresponds to the pixel intensity at that location. Each pixel's location can then be represented as a vector indicating the coordinates of that pixel in the image, [x, y], where x and y are the row and column of a pixel in the image matrix. This allows the coordinate to be multiplied by an affine-transformation matrix, which gives the position that the pixel value will be copied to in the output image.

However, transformations that involve translation require 3-dimensional homogeneous coordinates. The third dimension is usually set to a non-zero constant, usually 1, so that the new coordinate is [x, y, 1]. This allows the coordinate vector to be multiplied by a 3×3 matrix, and the constant third component is what makes translation shifts possible.

Because matrix multiplication is associative, multiple affine transformations can be combined into a single affine transformation by multiplying the matrix of each individual transformation in the order that the transformations are done. This results in a single matrix that, when applied to a point vector, gives the same result as all the individual transformations performed on the vector [x, y, 1] in sequence. Thus a sequence of affine transformation matrices can be reduced to a single affine transformation matrix.

For example, 2-dimensional coordinates only permit rotation about the origin (0, 0). But 3-dimensional homogeneous coordinates can be used to first translate any point to (0, 0), then perform the rotation, and lastly translate the origin (0, 0) back to the original point (the opposite of the first translation). These three affine transformations can be combined into a single matrix—thus allowing rotation around any point in the image.[41]
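The composition described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions: points are treated as homogeneous column vectors [x, y, 1], the rotation matrix uses the usual counter-clockwise mathematical convention (the visual direction in an image depends on how the axes are oriented), and all function names are hypothetical.

```python
import math


def matmul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
        for i in range(3)
    ]


def translation(tx, ty):
    """Homogeneous matrix that shifts a point by (tx, ty)."""
    return [[1, 0, tx], [0, 1, ty], [0, 0, 1]]


def rotation(theta):
    """Homogeneous matrix that rotates about the origin by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]


def apply(matrix, x, y):
    """Transform the homogeneous column vector [x, y, 1] and return (x', y')."""
    vec = (x, y, 1)
    out = [sum(matrix[i][k] * vec[k] for k in range(3)) for i in range(3)]
    return out[0], out[1]


def rotation_about(cx, cy, theta):
    """Translate (cx, cy) to the origin, rotate, then translate back."""
    # With column vectors, the rightmost matrix is the one applied first.
    return matmul(
        translation(cx, cy),
        matmul(rotation(theta), translation(-cx, -cy)),
    )


combined = rotation_about(1, 1, math.pi / 2)
print(apply(combined, 2, 1))  # approximately (1.0, 2.0)
print(apply(combined, 1, 1))  # the centre of rotation stays (approximately) fixed
```

Because the three matrices are multiplied once up front, every pixel coordinate afterwards needs only a single matrix-vector product.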

Image denoising with mathematical morphology

Mathematical morphology (MM) is a nonlinear image processing framework that analyzes shapes within images by probing local pixel neighborhoods using a small, predefined function called a structuring element. In the context of grayscale images, MM is especially useful for denoising through dilation and erosion—primitive operators that can be combined to build more complex filters.

Suppose we have:

- A discrete grayscale image:

- A structuring element:

Here, the structuring element defines the neighborhood of relative coordinates over which local operations are computed. Its values bias the image during dilation and erosion.

- Dilation

- Grayscale dilation is defined as:

- For example, the dilation at position (1, 1) is calculated as:

- Erosion

- Grayscale erosion is defined as:

- For example, the erosion at position (1, 1) is calculated as:

Results

After applying dilation to the image:

After applying erosion to the image:

Opening and Closing

MM operations, such as opening and closing, are composite processes that utilize both dilation and erosion to modify the structure of an image. These operations are particularly useful for tasks such as noise removal, shape smoothing, and object separation.

- Opening: This operation is performed by applying erosion to an image first, followed by dilation. The purpose of opening is to remove small objects or noise from the foreground while preserving the overall structure of larger objects. It is especially effective in situations where noise appears as isolated bright pixels or small, disconnected features.

For example, applying opening to an image with a structuring element would first reduce small details (through erosion) and then restore the main shapes (through dilation). This ensures that unwanted noise is removed without significantly altering the size or shape of larger objects.

- Closing: This operation is performed by applying dilation first, followed by erosion. Closing is typically used to fill small holes or gaps within objects and to connect broken parts of the foreground. It works by initially expanding the boundaries of objects (through dilation) and then refining the boundaries (through erosion).

For instance, applying closing to the same image would fill in small gaps within objects, such as connecting breaks in thin lines or closing small holes, while ensuring that the surrounding areas are not significantly affected.

Both opening and closing can be visualized as ways of refining the structure of an image: opening simplifies and removes small, unnecessary details, while closing consolidates and connects objects to form more cohesive structures.
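The four operators discussed in this section can be sketched in plain Python as follows. This is an illustration under stated assumptions rather than a reference implementation: dilation takes the maximum of `image[x+m][y+n] + se[m][n]` over the structuring element, erosion takes the minimum of `image[x+m][y+n] - se[m][n]`, neighbours that fall outside the image are skipped, and the sample values of `f` and `b` are hypothetical. The opening and closing demos use a flat, symmetric structuring element so that reflection conventions do not matter.

```python
def _morph(image, se, sign, pick):
    """Shared loop: combine each pixel's neighbourhood with the structuring element."""
    rows, cols = len(image), len(image[0])
    half_r, half_c = len(se) // 2, len(se[0]) // 2  # se is assumed to be odd-sized
    out = []
    for x in range(rows):
        out_row = []
        for y in range(cols):
            candidates = []
            for m in range(-half_r, half_r + 1):
                for n in range(-half_c, half_c + 1):
                    if 0 <= x + m < rows and 0 <= y + n < cols:
                        weight = se[m + half_r][n + half_c]
                        candidates.append(image[x + m][y + n] + sign * weight)
            out_row.append(pick(candidates))
        out.append(out_row)
    return out


def dilate(image, se):
    """Grayscale dilation: neighbourhood maximum of image + se."""
    return _morph(image, se, +1, max)


def erode(image, se):
    """Grayscale erosion: neighbourhood minimum of image - se."""
    return _morph(image, se, -1, min)


def opening(image, se):
    """Erosion followed by dilation."""
    return dilate(erode(image, se), se)


def closing(image, se):
    """Dilation followed by erosion."""
    return erode(dilate(image, se), se)


# Hypothetical 3x3 image and structuring element, for illustration only.
f = [[45, 50, 65], [40, 60, 55], [25, 15, 5]]
b = [[1, 2, 1], [2, 1, 1], [1, 0, 3]]
print(dilate(f, b)[1][1])  # 66
print(erode(f, b)[1][1])   # 2

# A flat 3x3 structuring element removes an isolated bright pixel (opening)
# and fills an isolated dark hole (closing).
flat = [[0] * 3 for _ in range(3)]
speck = [[10] * 5 for _ in range(5)]
speck[2][2] = 200
hole = [[10] * 5 for _ in range(5)]
hole[2][2] = 0
print(opening(speck, flat)[2][2])  # 10
print(closing(hole, flat)[2][2])   # 10
```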

Applications

Digital camera images

Digital cameras generally include specialized digital image processing hardware – either dedicated chips or added circuitry on other chips – to convert the raw data from their image sensor into a color-corrected image in a standard image file format. Additional post-processing techniques increase edge sharpness or color saturation to create more natural-looking images.

Film

Westworld (1973) was the first feature film to use digital image processing, pixellating photography to simulate an android's point of view.[42] Image processing is also widely used to produce the chroma key effect, which replaces the background behind actors with natural or artistic scenery.

Face detection

Face detection can be implemented with mathematical morphology, the discrete cosine transform (DCT), and horizontal projection.

General method with feature-based method

The feature-based method of face detection uses skin tone, edge detection, face shape, and facial features (such as the eyes and mouth) to detect faces. Skin tone, face shape, and the other elements that are distinctive of the human face can all be described as features.

Process explanation

- Given a batch of face images, first extract the skin tone range by sampling the face images. The skin tone range acts as a skin filter.

- Structural similarity index measure (SSIM) can be applied to compare images when extracting the skin tone.

- Normally, the HSV or RGB color spaces are suitable for the skin filter. For example, in HSV the skin tone range is [0,48,50] ~ [20,255,255].

- After filtering the images by skin tone, morphology and DCT are used to remove noise and fill in missing skin areas so that the face edge can be found.

- Opening or closing can be used to remove noise and fill in missing skin areas.

- DCT is used to reject objects with skin-like tones, since human faces typically have more texture.

- The Sobel operator or other edge operators can be applied to detect the face edge.

- To locate facial features such as the eyes, project the image and find the peaks in the histogram of the projection. This helps to locate detailed features such as the mouth, hair, and lips.

- Projection here means projecting the image along one axis to reveal high-frequency regions, which usually mark feature positions.
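The skin filter and projection steps above can be sketched with the Python standard library. This is a minimal illustration, not a complete face detector. It assumes that the quoted range [0,48,50] ~ [20,255,255] uses an 8-bit HSV scale with H in 0 to 180 and S, V in 0 to 255 (the text does not state the scale), and the function names and sample pixels are hypothetical.

```python
import colorsys

# Skin-tone range quoted in the text, read as (H, S, V) bounds.
# Assumption: H is on a 0-180 scale, S and V are on a 0-255 scale.
LOWER = (0, 48, 50)
UPPER = (20, 255, 255)


def to_hsv_8bit(r, g, b):
    """Convert 8-bit RGB to HSV with H in 0-180 and S, V in 0-255."""
    h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    return h * 180, s * 255, v * 255


def is_skin(r, g, b):
    """True when every HSV component lies inside the skin-tone range."""
    hsv = to_hsv_8bit(r, g, b)
    return all(lo <= c <= hi for lo, c, hi in zip(LOWER, hsv, UPPER))


def skin_mask(image):
    """Return a 0/1 mask for an image given as rows of (r, g, b) tuples."""
    return [[1 if is_skin(*px) else 0 for px in row] for row in image]


def horizontal_projection(mask):
    """Sum each row of the mask; large values mark rows with many skin pixels."""
    return [sum(row) for row in mask]


# Hypothetical 2x3 test image: blue, a skin-like tone, and gray pixels.
image = [
    [(0, 0, 255), (200, 120, 80), (128, 128, 128)],
    [(200, 120, 80), (200, 120, 80), (0, 0, 255)],
]
mask = skin_mask(image)
print(mask)                         # [[0, 1, 0], [1, 1, 0]]
print(horizontal_projection(mask))  # [1, 2]
```

In a fuller pipeline the mask would then be cleaned with opening or closing before edge detection and projection.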

Improvement of image quality method

Image quality can be degraded by camera vibration, over-exposure, a gray-level distribution that is too concentrated, noise, and other factors. For example, noise can be reduced with a smoothing method, while a concentrated gray-level distribution can be improved by histogram equalization.

Smoothing method

A simple way to think about the smoothing method comes from drawing: if a color looks wrong, take the colors around it and average them.

The smoothing method can be implemented with a mask and convolution. Take the small image and mask below as an example.

image is

$$\begin{bmatrix} 2 & 5 & 6 & 5 \\ 3 & 1 & 4 & 6 \\ 1 & 28 & 30 & 2 \\ 7 & 3 & 2 & 2 \end{bmatrix}$$

mask is

$$\frac{1}{9}\begin{bmatrix} 1 & 1 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{bmatrix}$$

After convolution and smoothing, the four interior pixels of the image are

$$\begin{bmatrix} 9 & 10 \\ 9 & 9 \end{bmatrix}$$

Consider image[1, 1], image[1, 2], image[2, 1], and image[2, 2].

The original pixel values are 1, 4, 28, and 30. After the smoothing mask is applied, they become 9, 10, 9, and 9 respectively, with each average rounded to the nearest integer.

new image[1, 1] = 1/9 * (image[0,0]+image[0,1]+image[0,2]+image[1,0]+image[1,1]+image[1,2]+image[2,0]+image[2,1]+image[2,2])

new image[1, 1] = round(1/9 * (2+5+6+3+1+4+1+28+30)) = round(80/9) = 9

new image[1, 2] = round(1/9 * (5+6+5+1+4+6+28+30+2)) = round(87/9) = 10

new image[2, 1] = round(1/9 * (3+1+4+1+28+30+7+3+2)) = round(79/9) = 9

new image[2, 2] = round(1/9 * (1+4+6+28+30+2+3+2+2)) = round(78/9) = 9
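The arithmetic above can be checked with a short Python sketch. The 4×4 image here is reconstructed from the nine-term sums listed above, the mask is assumed to be a 3×3 averaging mask, and leaving the border pixels unchanged is an assumption made only to keep the example small. Rounding each average to the nearest integer reproduces the values 9, 10, 9, 9.

```python
def mean_filter_interior(image):
    """Apply a 3x3 averaging mask to every pixel that has a full neighbourhood."""
    rows, cols = len(image), len(image[0])
    result = [row[:] for row in image]  # border pixels are copied unchanged (assumption)
    for i in range(1, rows - 1):
        for j in range(1, cols - 1):
            total = sum(
                image[i + di][j + dj]
                for di in (-1, 0, 1)
                for dj in (-1, 0, 1)
            )
            result[i][j] = int(total / 9 + 0.5)  # round to the nearest integer
    return result


image = [
    [2, 5, 6, 5],
    [3, 1, 4, 6],
    [1, 28, 30, 2],
    [7, 3, 2, 2],
]
smoothed = mean_filter_interior(image)
print([row[1:3] for row in smoothed[1:3]])  # [[9, 10], [9, 9]]
```

Because the averaging mask is symmetric, flipping it (as strict convolution requires) makes no difference to the result.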

Gray Level Histogram method

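Suppose a gray-level histogram has been computed from an image, as below. Transforming the image so that its histogram approaches a uniform distribution is what is usually called histogram equalization.

In the discrete case, the area of the gray-level histogram is $\sum_{i=0}^{k} H(p_i)$ (see figure 1), while the area of the uniform distribution is $\sum_{i=0}^{k} G(q_i)$ (see figure 2), where $H$ and $G$ are the histograms over the input gray levels $p_i$ and the output gray levels $q_i$. The transformation does not change the number of pixels, so the area does not change and $\sum_{i=0}^{k} H(p_i) = \sum_{i=0}^{k} G(q_i)$.

For the uniform distribution, the value of $G(q_i)$ is the constant $\tfrac{N^2}{q_k - q_0}$ for $0 < i < k$, where $N^2$ is the total number of pixels.

In the continuous case, the equation is $\int_{q_0}^{q} \tfrac{N^2}{q_k - q_0}\,ds = \int_{p_0}^{p} H(s)\,ds$.

Moreover, based on the definition of a function, the gray-level histogram method amounts to finding a function $f$ that satisfies f(p)=q.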
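One common way to build such a function f is from the cumulative histogram. The sketch below is a minimal plain-Python illustration of that approach, not necessarily the exact formulation intended above. The function name `equalize`, the flat list of integer pixels, and the rescaling that maps the darkest level present to 0 are all assumptions.

```python
def equalize(pixels, levels=256):
    """Map gray levels so that the cumulative histogram becomes close to linear."""
    histogram = [0] * levels
    for p in pixels:
        histogram[p] += 1

    # Cumulative histogram: cdf[p] is the number of pixels with level <= p.
    cdf, running = [], 0
    for count in histogram:
        running += count
        cdf.append(running)

    total = len(pixels)
    cdf_min = next(c for c in cdf if c > 0)  # cumulative count at the darkest level present
    if total == cdf_min:
        return list(pixels)  # only one gray level is present; nothing to spread out

    # mapping[p] plays the role of f(p) = q.
    mapping = [
        max(0, int((c - cdf_min) * (levels - 1) / (total - cdf_min) + 0.5))
        for c in cdf
    ]
    return [mapping[p] for p in pixels]


# A low-contrast input is stretched over the full range of levels.
print(equalize([10, 10, 10, 11]))  # [0, 0, 0, 255]

# An already uniform histogram is left unchanged.
print(equalize([0, 0, 1, 1, 2, 2, 3, 3], levels=4))  # [0, 0, 1, 1, 2, 2, 3, 3]
```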

Challenges

- Noise and distortions: Imperfections in images due to poor lighting, limited sensors, and file compression can result in unclear images that impact accurate image conversion.

- Variability in image quality: Variations in image quality and resolution, including blurry images and incomplete details, can hinder uniform processing across a database.

- Object detection and recognition: Identifying and recognising objects within images, especially in complex scenes with multiple objects and occlusions, poses a significant challenge.

- Data annotation and labelling: Labelling diverse and multiple images for machine recognition is crucial for further processing accuracy, as incorrect identification can lead to unrealistic results.

- Computational resource intensity: Image processing can demand substantial computational resources, which can be costly to obtain and can slow progress when they are unavailable.

See also

- Digital imaging

- Computer graphics

- Computer vision

- CVIPtools

- Digitizing

- Fourier transform

- Free boundary condition

- GPGPU

- Homomorphic filtering

- Image analysis

- IEEE Intelligent Transportation Systems Society

- Least-squares spectral analysis

- Medical imaging

- Multidimensional systems

- Relaxation labelling

- Remote sensing software

- Standard test image

- Superresolution

- Total variation denoising

- Machine Vision

- Bounded variation

- Radiomics

- Remote sensing

References
