Eigenvalues and eigenvectors

Concepts from linear algebra From Wikipedia, the free encyclopedia

In linear algebra, an eigenvector (/ˈaɪɡən-/ EYE-gən-) or characteristic vector is a vector that has its direction unchanged (or reversed) by a given linear transformation. More precisely, an eigenvector $\mathbf{v}$ of a linear transformation $T$ is scaled by a constant factor $\lambda$ when the linear transformation is applied to it: $T\mathbf{v} = \lambda\mathbf{v}$. The corresponding eigenvalue, characteristic value, or characteristic root is the multiplying factor $\lambda$ (possibly a negative or complex number).

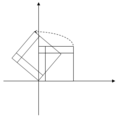

Geometrically, vectors are multi-dimensional quantities with magnitude and direction, often pictured as arrows. A linear transformation rotates, stretches, or shears the vectors upon which it acts. A linear transformation's eigenvectors are those vectors that are only stretched or shrunk, with neither rotation nor shear. The corresponding eigenvalue is the factor by which an eigenvector is stretched or shrunk. If the eigenvalue is negative, the eigenvector's direction is reversed.[1]

The eigenvectors and eigenvalues of a linear transformation serve to characterize it, and so they play important roles in all areas where linear algebra is applied, from geology to quantum mechanics. In particular, it is often the case that a system is represented by a linear transformation whose outputs are fed as inputs to the same transformation (feedback). In such an application, the largest eigenvalue is of particular importance, because it governs the long-term behavior of the system after many applications of the linear transformation, and the associated eigenvector is the steady state of the system.
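The feedback idea can be sketched with power iteration: apply the same matrix repeatedly and renormalize. This is only an illustration under stated assumptions: the matrix `A` is a hypothetical example with eigenvalues 1 and 3, the dominant eigenvalue is assumed to be unique in absolute value, and the start vector is assumed to have a nonzero component along the dominant eigenvector.

```python
import math

def mat_vec(A, v):
    """Multiply a square matrix (list of rows) by a vector (list)."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def power_iteration(A, v0, steps=100):
    """Repeatedly apply A and renormalize; returns (eigenvalue estimate, unit vector)."""
    v = v0[:]
    for _ in range(steps):
        w = mat_vec(A, v)
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Rayleigh quotient v.Av / v.v; v has unit length, so the denominator is 1.
    Av = mat_vec(A, v)
    lam = sum(x * y for x, y in zip(v, Av))
    return lam, v

A = [[2.0, 1.0], [1.0, 2.0]]   # hypothetical example matrix
lam, v = power_iteration(A, [1.0, 0.0])
print(lam, v)
```

After many applications the iterate lines up with the eigenvector of the largest eigenvalue, which is why that eigenvalue dominates the long-term behavior.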

Matrices

For an $n \times n$ matrix A and a nonzero vector $\mathbf{v}$ of length $n$, if multiplying A by $\mathbf{v}$ (denoted $A\mathbf{v}$) simply scales $\mathbf{v}$ by a factor λ, where λ is a scalar, then $\mathbf{v}$ is called an eigenvector of A, and λ is the corresponding eigenvalue. This relationship can be expressed as: $A\mathbf{v} = \lambda\mathbf{v}$.[2]

Given an n-dimensional vector space and a choice of basis, there is a direct correspondence between linear transformations from the vector space into itself and n-by-n square matrices. Hence, in a finite-dimensional vector space, it is equivalent to define eigenvalues and eigenvectors using either the language of linear transformations, or the language of matrices.[3][4]

Overview

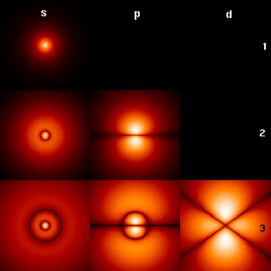

Eigenvalues and eigenvectors feature prominently in the analysis of linear transformations. The prefix eigen- is adopted from the German word eigen (cognate with the English word own) for 'proper', 'characteristic', 'own'.[5][6] Originally used to study principal axes of the rotational motion of rigid bodies, eigenvalues and eigenvectors have a wide range of applications, for example in stability analysis, vibration analysis, atomic orbitals, facial recognition, and matrix diagonalization.

In essence, an eigenvector v of a linear transformation T is a nonzero vector that, when T is applied to it, does not change direction. Applying T to the eigenvector only scales the eigenvector by the scalar value λ, called an eigenvalue. This condition can be written as the equation $T(\mathbf{v}) = \lambda\mathbf{v}$, referred to as the eigenvalue equation or eigenequation. In general, λ may be any scalar. For example, λ may be negative, in which case the eigenvector reverses direction as part of the scaling, or it may be zero or complex.

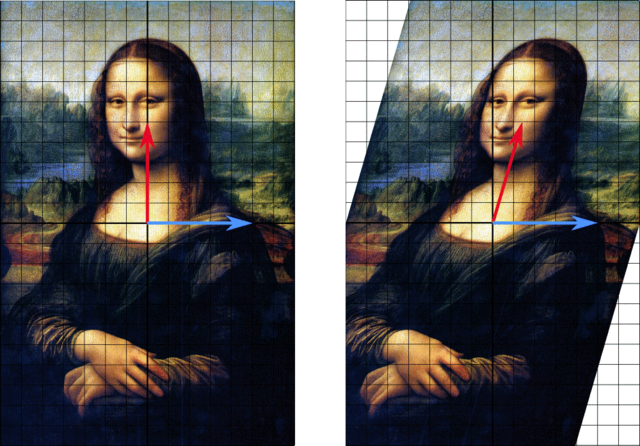

The example here, based on the Mona Lisa, provides a simple illustration. Each point on the painting can be represented as a vector pointing from the center of the painting to that point. The linear transformation in this example is called a shear mapping. Points in the top half are moved to the right, and points in the bottom half are moved to the left, proportional to how far they are from the horizontal axis that goes through the middle of the painting. The vectors pointing to each point in the original image are therefore tilted right or left, and made longer or shorter by the transformation. Points along the horizontal axis do not move at all when this transformation is applied. Therefore, any vector that points directly to the right or left with no vertical component is an eigenvector of this transformation, because the mapping does not change its direction. Moreover, these eigenvectors all have an eigenvalue equal to one, because the mapping does not change their length either.
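A minimal sketch of such a shear, assuming a horizontal shear of the form (x, y) → (x + k·y, y) with an arbitrarily chosen shear factor `k` (the factor used in the figure is not stated in the text):

```python
def shear(point, k=0.5):
    """Horizontal shear: x moves by k*y, y is unchanged. k is an assumed shear factor."""
    x, y = point
    return (x + k * y, y)

horizontal = (3.0, 0.0)   # no vertical component
tilted = (3.0, 2.0)       # has a vertical component

print(shear(horizontal))  # same vector: an eigenvector with eigenvalue 1
print(shear(tilted))      # direction changes: not an eigenvector
```

The horizontal vector comes back unchanged, while the tilted one is moved to a new direction.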

Linear transformations can take many different forms, mapping vectors in a variety of vector spaces, so the eigenvectors can also take many forms. For example, the linear transformation could be a differential operator like $\tfrac{d}{dx}$, in which case the eigenvectors are functions called eigenfunctions that are scaled by that differential operator, such as $\tfrac{d}{dx}e^{\lambda x} = \lambda e^{\lambda x}$. Alternatively, the linear transformation could take the form of an n by n matrix, in which case the eigenvectors are n by 1 matrices. If the linear transformation is expressed in the form of an n by n matrix A, then the eigenvalue equation for a linear transformation above can be rewritten as the matrix multiplication $A\mathbf{v} = \lambda\mathbf{v}$, where the eigenvector v is an n by 1 matrix. For a matrix, eigenvalues and eigenvectors can be used to decompose the matrix, for example by diagonalizing it.

Eigenvalues and eigenvectors give rise to many closely related mathematical concepts, and the prefix eigen- is applied liberally when naming them:

- The set of all eigenvectors of a linear transformation, each paired with its corresponding eigenvalue, is called the eigensystem of that transformation.[7][8]

- The set of all eigenvectors of T corresponding to the same eigenvalue, together with the zero vector, is called an eigenspace, or the characteristic space of T associated with that eigenvalue.[9]

- If a set of eigenvectors of T forms a basis of the domain of T, then this basis is called an eigenbasis.

History

Eigenvalues are often introduced in the context of linear algebra or matrix theory. Historically, however, they arose in the study of quadratic forms and differential equations.

In the 18th century, Leonhard Euler studied the rotational motion of a rigid body, and discovered the importance of the principal axes.[a] Joseph-Louis Lagrange realized that the principal axes are the eigenvectors of the inertia matrix.[10]

In the early 19th century, Augustin-Louis Cauchy saw how their work could be used to classify the quadric surfaces, and generalized it to arbitrary dimensions.[11] Cauchy also coined the term racine caractéristique (characteristic root), for what is now called eigenvalue; his term survives in characteristic equation.[b]

Later, Joseph Fourier used the work of Lagrange and Pierre-Simon Laplace to solve the heat equation by separation of variables in his 1822 treatise The Analytic Theory of Heat (Théorie analytique de la chaleur).[12] Charles-François Sturm elaborated on Fourier's ideas further, and brought them to the attention of Cauchy, who combined them with his own ideas and arrived at the fact that real symmetric matrices have real eigenvalues.[11] This was extended by Charles Hermite in 1855 to what are now called Hermitian matrices.[13]

Around the same time, Francesco Brioschi proved that the eigenvalues of orthogonal matrices lie on the unit circle,[11] and Alfred Clebsch found the corresponding result for skew-symmetric matrices.[13] Finally, Karl Weierstrass clarified an important aspect in the stability theory started by Laplace, by realizing that defective matrices can cause instability.[11]

In the meantime, Joseph Liouville studied eigenvalue problems similar to those of Sturm; the discipline that grew out of their work is now called Sturm–Liouville theory.[14] Schwarz studied the first eigenvalue of Laplace's equation on general domains towards the end of the 19th century, while Poincaré studied Poisson's equation a few years later.[15]

At the start of the 20th century, David Hilbert studied the eigenvalues of integral operators by viewing the operators as infinite matrices.[16] He was the first to use the German word eigen, which means "own",[6] to denote eigenvalues and eigenvectors in 1904,[c] though he may have been following a related usage by Hermann von Helmholtz. For some time, the standard term in English was "proper value", but the more distinctive term "eigenvalue" is the standard today.[17]

The first numerical algorithm for computing eigenvalues and eigenvectors appeared in 1929, when Richard von Mises published the power method. One of the most popular methods today, the QR algorithm, was proposed independently by John G. F. Francis[18] and Vera Kublanovskaya[19] in 1961.[20][21]

Eigenvalues and eigenvectors of matrices

Eigenvalues and eigenvectors are often introduced to students in the context of linear algebra courses focused on matrices.[22][23] Furthermore, linear transformations over a finite-dimensional vector space can be represented using matrices,[3][4] which is especially common in numerical and computational applications.[24]

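Consider n-dimensional vectors that are formed as a list of n scalars, such as the three-dimensional vectors

$$\mathbf{x} = \begin{bmatrix} 1 \\ -3 \\ 4 \end{bmatrix} \quad\text{and}\quad \mathbf{y} = \begin{bmatrix} -20 \\ 60 \\ -80 \end{bmatrix}.$$

These vectors are said to be scalar multiples of each other, or parallel or collinear, if there is a scalar λ such that

$$\mathbf{x} = \lambda\mathbf{y}.$$

In this case, $\lambda = -\tfrac{1}{20}$.

Now consider the linear transformation of n-dimensional vectors defined by an n by n matrix A,

$$A\mathbf{v} = \mathbf{w},$$

or

$$\begin{bmatrix} A_{11} & A_{12} & \cdots & A_{1n} \\ A_{21} & A_{22} & \cdots & A_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ A_{n1} & A_{n2} & \cdots & A_{nn} \end{bmatrix} \begin{bmatrix} v_1 \\ v_2 \\ \vdots \\ v_n \end{bmatrix} = \begin{bmatrix} w_1 \\ w_2 \\ \vdots \\ w_n \end{bmatrix},$$

where, for each row,

$$w_i = A_{i1}v_1 + A_{i2}v_2 + \cdots + A_{in}v_n = \sum_{j=1}^{n} A_{ij}v_j.$$

If it occurs that v and w are scalar multiples, that is if

$$A\mathbf{v} = \mathbf{w} = \lambda\mathbf{v}, \tag{1}$$

then v is an eigenvector of the linear transformation A and the scale factor λ is the eigenvalue corresponding to that eigenvector. Equation (1) is the eigenvalue equation for the matrix A.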

Equation (1) can be stated equivalently as

$$(A - \lambda I)\mathbf{v} = \mathbf{0}, \tag{2}$$

where I is the n by n identity matrix and 0 is the zero vector.

Eigenvalues and the characteristic polynomial

Equation (2) has a nonzero solution v if and only if the determinant of the matrix (A − λI) is zero. Therefore, the eigenvalues of A are values of λ that satisfy the equation

$$\det(A - \lambda I) = 0. \tag{3}$$

Using the Leibniz formula for determinants, the left-hand side of equation (3) is a polynomial function of the variable λ and the degree of this polynomial is n, the order of the matrix A. Its coefficients depend on the entries of A, except that its term of degree n is always $(-1)^n\lambda^n$. This polynomial is called the characteristic polynomial of A. Equation (3) is called the characteristic equation or the secular equation of A.

The fundamental theorem of algebra implies that the characteristic polynomial of an n-by-n matrix A, being a polynomial of degree n, can be factored into the product of n linear terms,

$$\det(A - \lambda I) = (\lambda_1 - \lambda)(\lambda_2 - \lambda)\cdots(\lambda_n - \lambda), \tag{4}$$

where each $\lambda_i$ may be real but in general is a complex number. The numbers $\lambda_1, \lambda_2, \ldots, \lambda_n$, which may not all have distinct values, are roots of the polynomial and are the eigenvalues of A.

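As a brief example, which is described in more detail in the examples section later, consider the matrix

$$A = \begin{bmatrix} 2 & 1 \\ 1 & 2 \end{bmatrix}.$$

Taking the determinant of (A − λI), the characteristic polynomial of A is

$$\det(A - \lambda I) = \begin{vmatrix} 2 - \lambda & 1 \\ 1 & 2 - \lambda \end{vmatrix} = 3 - 4\lambda + \lambda^2.$$

Setting the characteristic polynomial equal to zero, it has roots at λ=1 and λ=3, which are the two eigenvalues of A. The eigenvectors corresponding to each eigenvalue can be found by solving for the components of the eigenvectors v in the equation $(A - \lambda I)\mathbf{v} = \mathbf{0}$ at each eigenvalue λ. In this example, the eigenvectors are any nonzero scalar multiples of

$$\mathbf{v}_{\lambda=1} = \begin{bmatrix} 1 \\ -1 \end{bmatrix}, \quad \mathbf{v}_{\lambda=3} = \begin{bmatrix} 1 \\ 1 \end{bmatrix}.$$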

If the entries of the matrix A are all real numbers, then the coefficients of the characteristic polynomial will also be real numbers, but the eigenvalues may still have nonzero imaginary parts. The entries of the corresponding eigenvectors therefore may also have nonzero imaginary parts. Similarly, the eigenvalues may be irrational numbers even if all the entries of A are rational numbers or even if they are all integers. However, if the entries of A are all algebraic numbers, which include the rationals, the eigenvalues must also be algebraic numbers.

The non-real roots of a real polynomial with real coefficients can be grouped into pairs of complex conjugates, namely with the two members of each pair having imaginary parts that differ only in sign and the same real part. If the degree is odd, then by the intermediate value theorem at least one of the roots is real. Therefore, any real matrix with odd order has at least one real eigenvalue, whereas a real matrix with even order may not have any real eigenvalues. The eigenvectors associated with these complex eigenvalues are also complex and also appear in complex conjugate pairs.

Spectrum of a matrix

The spectrum of a matrix is the list of eigenvalues, repeated according to multiplicity; in an alternative notation the set of eigenvalues with their multiplicities.

An important quantity associated with the spectrum is the maximum absolute value of any eigenvalue. This is known as the spectral radius of the matrix.

Algebraic multiplicity

Let $\lambda_i$ be an eigenvalue of an n by n matrix A. The algebraic multiplicity $\mu_A(\lambda_i)$ of the eigenvalue is its multiplicity as a root of the characteristic polynomial, that is, the largest integer k such that $(\lambda - \lambda_i)^k$ divides evenly that polynomial.[9][25][26]

Suppose a matrix A has dimension n and d ≤ n distinct eigenvalues. Whereas equation (4) factors the characteristic polynomial of A into the product of n linear terms with some terms potentially repeating, the characteristic polynomial can also be written as the product of d terms each corresponding to a distinct eigenvalue and raised to the power of the algebraic multiplicity,

$$\det(A - \lambda I) = (\lambda_1 - \lambda)^{\mu_A(\lambda_1)}(\lambda_2 - \lambda)^{\mu_A(\lambda_2)}\cdots(\lambda_d - \lambda)^{\mu_A(\lambda_d)}.$$

If d = n then the right-hand side is the product of n linear terms and this is the same as equation (4). The size of each eigenvalue's algebraic multiplicity is related to the dimension n as

$$1 \le \mu_A(\lambda_i) \le n, \qquad \mu_A = \sum_{i=1}^{d}\mu_A(\lambda_i) = n.$$

If $\mu_A(\lambda_i) = 1$, then $\lambda_i$ is said to be a simple eigenvalue.[26] If $\mu_A(\lambda_i)$ equals the geometric multiplicity of $\lambda_i$, $\gamma_A(\lambda_i)$, defined in the next section, then $\lambda_i$ is said to be a semisimple eigenvalue.

Eigenspaces, geometric multiplicity, and the eigenbasis for matrices

Given a particular eigenvalue λ of the n by n matrix A, define the set E to be all vectors v that satisfy equation (2),

$$E = \{\mathbf{v} : (A - \lambda I)\mathbf{v} = \mathbf{0}\}.$$

On one hand, this set is precisely the kernel or nullspace of the matrix (A − λI). On the other hand, by definition, any nonzero vector that satisfies this condition is an eigenvector of A associated with λ. So, the set E is the union of the zero vector with the set of all eigenvectors of A associated with λ, and E equals the nullspace of (A − λI). E is called the eigenspace or characteristic space of A associated with λ.[27][9] In general λ is a complex number and the eigenvectors are complex n by 1 matrices. A property of the nullspace is that it is a linear subspace, so E is a linear subspace of $\mathbb{C}^n$.

Because the eigenspace E is a linear subspace, it is closed under addition. That is, if two vectors u and v belong to the set E, written u, v ∈ E, then (u + v) ∈ E or equivalently A(u + v) = λ(u + v). This can be checked using the distributive property of matrix multiplication. Similarly, because E is a linear subspace, it is closed under scalar multiplication. That is, if v ∈ E and α is a complex number, (αv) ∈ E or equivalently A(αv) = λ(αv). This can be checked by noting that multiplication of complex matrices by complex numbers is commutative. As long as u + v and αv are not zero, they are also eigenvectors of A associated with λ.

The dimension of the eigenspace E associated with λ, or equivalently the maximum number of linearly independent eigenvectors associated with λ, is referred to as the eigenvalue's geometric multiplicity $\gamma_A(\lambda)$. Because E is also the nullspace of (A − λI), the geometric multiplicity of λ is the dimension of the nullspace of (A − λI), also called the nullity of (A − λI), which relates to the dimension and rank of (A − λI) as

$$\gamma_A(\lambda) = n - \operatorname{rank}(A - \lambda I).$$

Because of the definition of eigenvalues and eigenvectors, an eigenvalue's geometric multiplicity must be at least one, that is, each eigenvalue has at least one associated eigenvector. Furthermore, an eigenvalue's geometric multiplicity cannot exceed its algebraic multiplicity. Additionally, recall that an eigenvalue's algebraic multiplicity cannot exceed n.

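To prove the inequality $\gamma_A(\lambda) \le \mu_A(\lambda)$, consider how the definition of geometric multiplicity implies the existence of $\gamma_A(\lambda)$ orthonormal eigenvectors $\mathbf{v}_1, \ldots, \mathbf{v}_{\gamma_A(\lambda)}$, such that $A\mathbf{v}_k = \lambda\mathbf{v}_k$. We can therefore find a (unitary) matrix V whose first $\gamma_A(\lambda)$ columns are these eigenvectors, and whose remaining columns can be any orthonormal set of $n - \gamma_A(\lambda)$ vectors orthogonal to these eigenvectors of A. Then V has full rank and is therefore invertible. Evaluating $D := V^{*}AV$ (where $V^{*} = V^{-1}$ because V is unitary), we get a matrix whose top left block is the diagonal matrix $\lambda I_{\gamma_A(\lambda)}$. This can be seen by evaluating what the left-hand side does to the first $\gamma_A(\lambda)$ column basis vectors. By reorganizing and adding $-\xi V$ on both sides, we get $(A - \xi I)V = V(D - \xi I)$ since I commutes with V. In other words, $A - \xi I$ is similar to $D - \xi I$, and $\det(A - \xi I) = \det(D - \xi I)$. But from the definition of D, we know that $\det(D - \xi I)$ contains a factor $(\xi - \lambda)^{\gamma_A(\lambda)}$, which means that the algebraic multiplicity of $\lambda$ must satisfy $\mu_A(\lambda) \ge \gamma_A(\lambda)$.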

Suppose A has $d \le n$ distinct eigenvalues $\lambda_1, \ldots, \lambda_d$, where the geometric multiplicity of $\lambda_i$ is $\gamma_A(\lambda_i)$. The total geometric multiplicity of A,

$$\gamma_A = \sum_{i=1}^{d}\gamma_A(\lambda_i), \qquad d \le \gamma_A \le n,$$

is the dimension of the sum of all the eigenspaces of A's eigenvalues, or equivalently the maximum number of linearly independent eigenvectors of A. If $\gamma_A = n$, then

- The direct sum of the eigenspaces of all of A's eigenvalues is the entire vector space $\mathbb{C}^n$.

- A basis of $\mathbb{C}^n$ can be formed from n linearly independent eigenvectors of A; such a basis is called an eigenbasis.

- Any vector in $\mathbb{C}^n$ can be written as a linear combination of eigenvectors of A.

Additional properties

Let $A$ be an arbitrary $n \times n$ matrix of complex numbers with eigenvalues $\lambda_1, \ldots, \lambda_n$. Each eigenvalue appears $\mu_A(\lambda_i)$ times in this list, where $\mu_A(\lambda_i)$ is the eigenvalue's algebraic multiplicity. The following are properties of this matrix and its eigenvalues:

- The trace of $A$, defined as the sum of its diagonal elements, is also the sum of all eigenvalues,[28][29][30] $\operatorname{tr}(A) = \sum_{i=1}^{n} a_{ii} = \sum_{i=1}^{n}\lambda_i = \lambda_1 + \lambda_2 + \cdots + \lambda_n$.

- The determinant of $A$ is the product of all its eigenvalues,[28][31][32] $\det(A) = \prod_{i=1}^{n}\lambda_i = \lambda_1\lambda_2\cdots\lambda_n$.

- The eigenvalues of the $k$th power of $A$; i.e., the eigenvalues of $A^k$, for any positive integer $k$, are $\lambda_1^k, \ldots, \lambda_n^k$.

- The matrix $A$ is invertible if and only if every eigenvalue is nonzero.

- If $A$ is invertible, then the eigenvalues of $A^{-1}$ are $\tfrac{1}{\lambda_1}, \ldots, \tfrac{1}{\lambda_n}$ and each eigenvalue's geometric multiplicity coincides. Moreover, since the characteristic polynomial of the inverse is the reciprocal polynomial of the original, the eigenvalues share the same algebraic multiplicity.

- If $A$ is equal to its conjugate transpose $A^{*}$, or equivalently if $A$ is Hermitian, then every eigenvalue is real. The same is true of any symmetric real matrix.

- If $A$ is not only Hermitian but also positive-definite, positive-semidefinite, negative-definite, or negative-semidefinite, then every eigenvalue is positive, non-negative, negative, or non-positive, respectively.

- If $A$ is unitary, every eigenvalue has absolute value $|\lambda_i| = 1$.

- If $A$ is an $n \times n$ matrix and $\{\lambda_1, \ldots, \lambda_k\}$ are its eigenvalues, then the eigenvalues of matrix $I + A$ (where $I$ is the identity matrix) are $\{\lambda_1 + 1, \ldots, \lambda_k + 1\}$. Moreover, if $\alpha \in \mathbb{C}$, the eigenvalues of $\alpha I + A$ are $\{\lambda_1 + \alpha, \ldots, \lambda_k + \alpha\}$. More generally, for a polynomial $P$ the eigenvalues of matrix $P(A)$ are $\{P(\lambda_1), \ldots, P(\lambda_k)\}$.
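The trace and determinant properties can be checked by hand on a small case. The sketch below assumes a hypothetical 3-by-3 matrix whose eigenvalues are taken to be 2, 1, and 11; the code first confirms that each value makes $\det(A - \lambda I)$ vanish and then compares the trace and determinant with the sum and product.

```python
def trace(A):
    """Sum of the diagonal entries."""
    return sum(A[i][i] for i in range(len(A)))

def det3(A):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    (a, b, c), (d, e, f), (g, h, i) = A
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def shift(A, lam):
    """Return A - lam*I."""
    n = len(A)
    return [[A[r][c] - (lam if r == c else 0) for c in range(n)] for r in range(n)]

A = [[2, 0, 0],
     [0, 3, 4],
     [0, 4, 9]]            # hypothetical example matrix
eigenvalues = [2, 1, 11]   # assumed eigenvalues, verified below

# Each eigenvalue is a root of the characteristic polynomial.
roots_ok = all(det3(shift(A, lam)) == 0 for lam in eigenvalues)

product = 1
for lam in eigenvalues:
    product *= lam

print(roots_ok, trace(A) == sum(eigenvalues), det3(A) == product)
```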

Left and right eigenvectors

Many disciplines traditionally represent vectors as matrices with a single column rather than as matrices with a single row. For that reason, the word "eigenvector" in the context of matrices almost always refers to a right eigenvector, namely a column vector that right multiplies the $n \times n$ matrix $A$ in the defining equation, equation (1),

$$A\mathbf{v} = \lambda\mathbf{v}.$$

The eigenvalue and eigenvector problem can also be defined for row vectors that left multiply matrix $A$. In this formulation, the defining equation is

$$\mathbf{u}A = \kappa\mathbf{u},$$

where $\kappa$ is a scalar and $\mathbf{u}$ is a $1 \times n$ matrix. Any row vector $\mathbf{u}$ satisfying this equation is called a left eigenvector of $A$ and $\kappa$ is its associated eigenvalue. Taking the transpose of this equation,

$$A^{\mathsf{T}}\mathbf{u}^{\mathsf{T}} = \kappa\mathbf{u}^{\mathsf{T}}.$$

Comparing this equation to equation (1), it follows immediately that a left eigenvector of $A$ is the same as the transpose of a right eigenvector of $A^{\mathsf{T}}$, with the same eigenvalue. Furthermore, since the characteristic polynomial of $A^{\mathsf{T}}$ is the same as the characteristic polynomial of $A$, the left and right eigenvectors of $A$ are associated with the same eigenvalues.
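As a small check of the left/right relationship, the sketch below uses a hypothetical non-symmetric 2-by-2 matrix `M` and a candidate row vector `u`; both are chosen only for illustration.

```python
def vec_mat(u, M):
    """Row vector times matrix."""
    return [sum(u[i] * M[i][j] for i in range(len(u))) for j in range(len(M[0]))]

def mat_vec(M, v):
    """Matrix times column vector."""
    return [sum(a * x for a, x in zip(row, v)) for row in M]

def transpose(M):
    return [list(col) for col in zip(*M)]

M = [[2, 1],
     [0, 3]]             # hypothetical non-symmetric example
u, kappa = [1, -1], 2    # candidate left eigenvector and its eigenvalue

# u M = kappa u: u is a left eigenvector of M.
left_ok = vec_mat(u, M) == [kappa * x for x in u]
# M^T u^T = kappa u^T: the same u is a right eigenvector of the transpose.
right_of_transpose_ok = mat_vec(transpose(M), u) == [kappa * x for x in u]
# The right eigenvector of M for the same eigenvalue is a different vector.
right_ok = mat_vec(M, [1, 0]) == [kappa * x for x in [1, 0]]

print(left_ok, right_of_transpose_ok, right_ok)
```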

Diagonalization and the eigendecomposition

Suppose the eigenvectors of A form a basis, or equivalently A has n linearly independent eigenvectors $\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_n$ with associated eigenvalues $\lambda_1, \lambda_2, \ldots, \lambda_n$. The eigenvalues need not be distinct. Define a square matrix Q whose columns are the n linearly independent eigenvectors of A,

$$Q = \begin{bmatrix} \mathbf{v}_1 & \mathbf{v}_2 & \cdots & \mathbf{v}_n \end{bmatrix}.$$

Since each column of Q is an eigenvector of A, right multiplying A by Q scales each column of Q by its associated eigenvalue,

$$AQ = \begin{bmatrix} \lambda_1\mathbf{v}_1 & \lambda_2\mathbf{v}_2 & \cdots & \lambda_n\mathbf{v}_n \end{bmatrix}.$$

With this in mind, define a diagonal matrix Λ where each diagonal element $\Lambda_{ii}$ is the eigenvalue associated with the ith column of Q. Then

$$AQ = Q\Lambda.$$

Because the columns of Q are linearly independent, Q is invertible. Right multiplying both sides of the equation by $Q^{-1}$,

$$A = Q\Lambda Q^{-1},$$

or by instead left multiplying both sides by $Q^{-1}$,

$$Q^{-1}AQ = \Lambda.$$

A can therefore be decomposed into a matrix composed of its eigenvectors, a diagonal matrix with its eigenvalues along the diagonal, and the inverse of the matrix of eigenvectors. This is called the eigendecomposition and it is a similarity transformation. Such a matrix A is said to be similar to the diagonal matrix Λ or diagonalizable. The matrix Q is the change of basis matrix of the similarity transformation. Essentially, the matrices A and Λ represent the same linear transformation expressed in two different bases. The eigenvectors are used as the basis when representing the linear transformation as Λ.
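The decomposition can be verified exactly on a small case. The sketch below assumes the 2-by-2 matrix with rows (2, 1) and (1, 2), whose eigenvalues are 1 and 3 with eigenvectors (1, −1) and (1, 1); it rebuilds A from Q, Λ, and the inverse of Q, and then uses the same factors to compute a matrix power.

```python
from fractions import Fraction

def mat_mul(X, Y):
    """Product of two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

def inv_2x2(M):
    """Exact inverse of an invertible 2x2 matrix."""
    (a, b), (c, d) = M
    det = Fraction(a * d - b * c)
    return [[d / det, -b / det], [-c / det, a / det]]

A = [[2, 1], [1, 2]]
Q = [[1, 1], [-1, 1]]     # columns are the eigenvectors (1, -1) and (1, 1)
Lam = [[1, 0], [0, 3]]    # matching eigenvalues on the diagonal
Q_inv = inv_2x2(Q)

reconstructed = mat_mul(mat_mul(Q, Lam), Q_inv)   # Q Lam Q^{-1}

# Powers are cheap in the eigenbasis: A^5 = Q Lam^5 Q^{-1}.
Lam5 = [[1 ** 5, 0], [0, 3 ** 5]]
A5_via_eig = mat_mul(mat_mul(Q, Lam5), Q_inv)

# Direct repeated multiplication for comparison.
A5_direct = A
for _ in range(4):
    A5_direct = mat_mul(A5_direct, A)

print(reconstructed == A, A5_via_eig == A5_direct)
```

Using `Fraction` keeps the arithmetic exact, so the comparisons are equalities rather than tolerance checks.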

Conversely, suppose a matrix A is diagonalizable. Let P be a non-singular square matrix such that $P^{-1}AP$ is some diagonal matrix D. Left multiplying both by P, AP = PD. Each column of P must therefore be an eigenvector of A whose eigenvalue is the corresponding diagonal element of D. Since the columns of P must be linearly independent for P to be invertible, there exist n linearly independent eigenvectors of A. It then follows that the eigenvectors of A form a basis if and only if A is diagonalizable.

A matrix that is not diagonalizable is said to be defective. For defective matrices, the notion of eigenvectors generalizes to generalized eigenvectors and the diagonal matrix of eigenvalues generalizes to the Jordan normal form. Over an algebraically closed field, any matrix A has a Jordan normal form and therefore admits a basis of generalized eigenvectors and a decomposition into generalized eigenspaces.

Variational characterization

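In the Hermitian case, eigenvalues can be given a variational characterization. The largest eigenvalue of $H$ is the maximum value of the quadratic form $\mathbf{x}^{*}H\mathbf{x} / \mathbf{x}^{*}\mathbf{x}$ over nonzero vectors $\mathbf{x}$. A value of $\mathbf{x}$ that realizes that maximum is an eigenvector.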
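A brute-force sketch of this characterization for a real symmetric case: sample unit vectors on a half circle and keep the one with the largest quotient. The matrix is a hypothetical example with eigenvalues 1 and 3, and sampling is used only for illustration; it is not how the maximum would be found in practice.

```python
import math

def rayleigh_quotient(A, x):
    """x^T A x / x^T x for a real symmetric matrix A and nonzero real vector x."""
    Ax = [sum(a * xi for a, xi in zip(row, x)) for row in A]
    return sum(xi * yi for xi, yi in zip(x, Ax)) / sum(xi * xi for xi in x)

A = [[2.0, 1.0], [1.0, 2.0]]   # hypothetical symmetric example

# Unit vectors (cos t, sin t) for t in [0, pi); the quotient ignores sign and scale.
samples = [(math.cos(k * math.pi / 3600), math.sin(k * math.pi / 3600))
           for k in range(3600)]
best_x = max(samples, key=lambda x: rayleigh_quotient(A, x))
best_value = rayleigh_quotient(A, best_x)

print(best_value, best_x)
```

The maximizing sample points along (1, 1), the eigenvector of the largest eigenvalue.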

Matrix examples

Two-dimensional matrix example

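Consider the matrix

$$A = \begin{bmatrix} 2 & 1 \\ 1 & 2 \end{bmatrix}.$$

The figure on the right shows the effect of this transformation on point coordinates in the plane. The eigenvectors v of this transformation satisfy equation (1), and the values of λ for which the determinant of the matrix (A − λI) equals zero are the eigenvalues.

Taking the determinant to find the characteristic polynomial of A,

$$\det(A - \lambda I) = \begin{vmatrix} 2 - \lambda & 1 \\ 1 & 2 - \lambda \end{vmatrix} = 3 - 4\lambda + \lambda^2 = (\lambda - 3)(\lambda - 1).$$

Setting the characteristic polynomial equal to zero, it has roots at λ=1 and λ=3, which are the two eigenvalues of A.

For λ=1, equation (2) becomes

$$(A - I)\mathbf{v}_{\lambda=1} = \begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix}\begin{bmatrix} v_1 \\ v_2 \end{bmatrix} = \begin{bmatrix} 0 \\ 0 \end{bmatrix}, \qquad\text{that is,}\qquad v_1 + v_2 = 0.$$

Any nonzero vector with $v_1 = -v_2$ solves this equation. Therefore,

$$\mathbf{v}_{\lambda=1} = \begin{bmatrix} v_1 \\ -v_1 \end{bmatrix} = \begin{bmatrix} 1 \\ -1 \end{bmatrix}$$

is an eigenvector of A corresponding to λ = 1, as is any scalar multiple of this vector.

For λ=3, equation (2) becomes

$$(A - 3I)\mathbf{v}_{\lambda=3} = \begin{bmatrix} -1 & 1 \\ 1 & -1 \end{bmatrix}\begin{bmatrix} v_1 \\ v_2 \end{bmatrix} = \begin{bmatrix} 0 \\ 0 \end{bmatrix}, \qquad\text{that is,}\qquad -v_1 + v_2 = 0.$$

Any nonzero vector with $v_1 = v_2$ solves this equation. Therefore,

$$\mathbf{v}_{\lambda=3} = \begin{bmatrix} v_1 \\ v_1 \end{bmatrix} = \begin{bmatrix} 1 \\ 1 \end{bmatrix}$$

is an eigenvector of A corresponding to λ = 3, as is any scalar multiple of this vector.

Thus, the vectors $\mathbf{v}_{\lambda=1}$ and $\mathbf{v}_{\lambda=3}$ are eigenvectors of A associated with the eigenvalues λ=1 and λ=3, respectively.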
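The calculation can be replayed in a few lines. This sketch assumes the matrix with rows (2, 1) and (1, 2), which is consistent with the eigenvalues 1 and 3 and the conditions v1 = −v2 and v1 = v2 stated above; for a 2-by-2 matrix the characteristic polynomial is λ² − tr(A)·λ + det(A), so the quadratic formula gives the eigenvalues.

```python
import math

def eig_2x2_real(A):
    """Real eigenvalues of a 2x2 matrix from lambda^2 - tr(A)*lambda + det(A) = 0.
    Raises ValueError when the roots are not real."""
    (a, b), (c, d) = A
    tr, det = a + d, a * d - b * c
    disc = tr * tr - 4 * det
    if disc < 0:
        raise ValueError("eigenvalues are not real")
    r = math.sqrt(disc)
    return (tr - r) / 2, (tr + r) / 2

def mat_vec(A, v):
    """Matrix times column vector."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

A = [[2, 1], [1, 2]]
lam_small, lam_large = eig_2x2_real(A)
v_small, v_large = [1, -1], [1, 1]

# A v should equal lambda v for each pair.
check_small = mat_vec(A, v_small) == [lam_small * x for x in v_small]
check_large = mat_vec(A, v_large) == [lam_large * x for x in v_large]
print(lam_small, lam_large, check_small, check_large)
```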

Three-dimensional matrix example

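Consider the matrix

$$A = \begin{bmatrix} 2 & 0 & 0 \\ 0 & 3 & 4 \\ 0 & 4 & 9 \end{bmatrix}.$$

The characteristic polynomial of A is

$$\det(A - \lambda I) = \begin{vmatrix} 2 - \lambda & 0 & 0 \\ 0 & 3 - \lambda & 4 \\ 0 & 4 & 9 - \lambda \end{vmatrix} = (2 - \lambda)\bigl[(3 - \lambda)(9 - \lambda) - 16\bigr] = -\lambda^3 + 14\lambda^2 - 35\lambda + 22.$$

The roots of the characteristic polynomial are 2, 1, and 11, which are the only three eigenvalues of A. These eigenvalues correspond to the eigenvectors $\begin{bmatrix} 1 & 0 & 0 \end{bmatrix}^{\mathsf{T}}$, $\begin{bmatrix} 0 & -2 & 1 \end{bmatrix}^{\mathsf{T}}$, and $\begin{bmatrix} 0 & 1 & 2 \end{bmatrix}^{\mathsf{T}}$, or any nonzero multiple thereof.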

Three-dimensional matrix example with complex eigenvalues

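Consider the cyclic permutation matrix

$$A = \begin{bmatrix} 0 & 1 & 0 \\ 0 & 0 & 1 \\ 1 & 0 & 0 \end{bmatrix}.$$

This matrix shifts the coordinates of the vector up by one position and moves the first coordinate to the bottom. Its characteristic polynomial is $1 - \lambda^3$, whose roots are

$$\lambda_1 = 1, \qquad \lambda_2 = -\frac{1}{2} + i\frac{\sqrt{3}}{2}, \qquad \lambda_3 = \lambda_2^{*} = -\frac{1}{2} - i\frac{\sqrt{3}}{2},$$

where $i$ is an imaginary unit with $i^2 = -1$.

For the real eigenvalue $\lambda_1 = 1$, any vector with three equal nonzero entries is an eigenvector. For example,

$$A\begin{bmatrix} 5 \\ 5 \\ 5 \end{bmatrix} = \begin{bmatrix} 5 \\ 5 \\ 5 \end{bmatrix} = 1 \cdot \begin{bmatrix} 5 \\ 5 \\ 5 \end{bmatrix}.$$

For the complex conjugate pair of non-real eigenvalues,

$$\lambda_2\lambda_3 = 1, \qquad \lambda_2^2 = \lambda_3, \qquad \lambda_3^2 = \lambda_2.$$

Then

$$A\begin{bmatrix} 1 \\ \lambda_2 \\ \lambda_3 \end{bmatrix} = \begin{bmatrix} \lambda_2 \\ \lambda_3 \\ 1 \end{bmatrix} = \lambda_2 \cdot \begin{bmatrix} 1 \\ \lambda_2 \\ \lambda_3 \end{bmatrix}$$

and

$$A\begin{bmatrix} 1 \\ \lambda_3 \\ \lambda_2 \end{bmatrix} = \begin{bmatrix} \lambda_3 \\ \lambda_2 \\ 1 \end{bmatrix} = \lambda_3 \cdot \begin{bmatrix} 1 \\ \lambda_3 \\ \lambda_2 \end{bmatrix}.$$

Therefore, the other two eigenvectors of A are complex and are $\mathbf{v}_{\lambda_2} = \begin{bmatrix} 1 & \lambda_2 & \lambda_3 \end{bmatrix}^{\mathsf{T}}$ and $\mathbf{v}_{\lambda_3} = \begin{bmatrix} 1 & \lambda_3 & \lambda_2 \end{bmatrix}^{\mathsf{T}}$ with eigenvalues $\lambda_2$ and $\lambda_3$, respectively. The two complex eigenvectors also appear in a complex conjugate pair,

$$\mathbf{v}_{\lambda_2} = \mathbf{v}_{\lambda_3}^{*}.$$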
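A numerical check of this example, assuming the cyclic permutation matrix that maps (x1, x2, x3) to (x2, x3, x1), as the description of the shift suggests. The non-real eigenvalues are taken to be the primitive cube roots of unity, and the helper `is_eigenpair` is a hypothetical name introduced here.

```python
import cmath

A = [[0, 1, 0],
     [0, 0, 1],
     [1, 0, 0]]

def mat_vec(A, v):
    """Matrix times column vector."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

lam2 = cmath.exp(2j * cmath.pi / 3)   # -1/2 + i*sqrt(3)/2
lam3 = lam2.conjugate()               # -1/2 - i*sqrt(3)/2
v2 = [1, lam2, lam3]
v3 = [1, lam3, lam2]

def is_eigenpair(A, lam, v, tol=1e-12):
    """True when A v equals lam v up to a floating-point tolerance."""
    return all(abs(x - lam * y) < tol for x, y in zip(mat_vec(A, v), v))

print(is_eigenpair(A, 1, [5, 5, 5]),
      is_eigenpair(A, lam2, v2),
      is_eigenpair(A, lam3, v3))
```

A tolerance is needed because the cube roots of unity are only represented approximately in floating point.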

Diagonal matrix example

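Matrices with entries only along the main diagonal are called diagonal matrices. The eigenvalues of a diagonal matrix are the diagonal elements themselves. Consider the matrix

$$A = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 2 & 0 \\ 0 & 0 & 3 \end{bmatrix}.$$

The characteristic polynomial of A is

$$\det(A - \lambda I) = (1 - \lambda)(2 - \lambda)(3 - \lambda),$$

which has the roots $\lambda_1 = 1$, $\lambda_2 = 2$, and $\lambda_3 = 3$. These roots are the diagonal elements as well as the eigenvalues of A.

Each diagonal element corresponds to an eigenvector whose only nonzero component is in the same row as that diagonal element. In the example, the eigenvalues correspond to the eigenvectors,

$$\mathbf{v}_{\lambda_1} = \begin{bmatrix} 1 \\ 0 \\ 0 \end{bmatrix}, \quad \mathbf{v}_{\lambda_2} = \begin{bmatrix} 0 \\ 1 \\ 0 \end{bmatrix}, \quad \mathbf{v}_{\lambda_3} = \begin{bmatrix} 0 \\ 0 \\ 1 \end{bmatrix},$$

respectively, as well as scalar multiples of these vectors.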

Triangular matrix example

A matrix whose elements above the main diagonal are all zero is called a lower triangular matrix, while a matrix whose elements below the main diagonal are all zero is called an upper triangular matrix. As with diagonal matrices, the eigenvalues of triangular matrices are the elements of the main diagonal.

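Consider the lower triangular matrix,

$$A = \begin{bmatrix} 1 & 0 & 0 \\ 1 & 2 & 0 \\ 2 & 3 & 3 \end{bmatrix}.$$

The characteristic polynomial of A is

$$\det(A - \lambda I) = (1 - \lambda)(2 - \lambda)(3 - \lambda),$$

which has the roots $\lambda_1 = 1$, $\lambda_2 = 2$, and $\lambda_3 = 3$. These roots are the diagonal elements as well as the eigenvalues of A.

These eigenvalues correspond to the eigenvectors,

$$\mathbf{v}_{\lambda_1} = \begin{bmatrix} 1 \\ -1 \\ \tfrac{1}{2} \end{bmatrix}, \quad \mathbf{v}_{\lambda_2} = \begin{bmatrix} 0 \\ 1 \\ -3 \end{bmatrix}, \quad \mathbf{v}_{\lambda_3} = \begin{bmatrix} 0 \\ 0 \\ 1 \end{bmatrix},$$

respectively, as well as scalar multiples of these vectors.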

Matrix with repeated eigenvalues example

As in the previous example, the lower triangular matrix

$$A = \begin{bmatrix} 2 & 0 & 0 & 0 \\ 1 & 2 & 0 & 0 \\ 0 & 1 & 3 & 0 \\ 0 & 0 & 1 & 3 \end{bmatrix}$$

has a characteristic polynomial that is the product of its diagonal elements,

$$\det(A - \lambda I) = (2 - \lambda)^2(3 - \lambda)^2.$$

The roots of this polynomial, and hence the eigenvalues, are 2 and 3. The algebraic multiplicity of each eigenvalue is 2; in other words they are both double roots. The sum of the algebraic multiplicities of all distinct eigenvalues is $\mu_A = 4 = n$, the order of the characteristic polynomial and the dimension of A.

On the other hand, the geometric multiplicity of the eigenvalue 2 is only 1, because its eigenspace is spanned by just one vector $\begin{bmatrix} 0 & 1 & -1 & 1 \end{bmatrix}^{\mathsf{T}}$ and is therefore 1-dimensional. Similarly, the geometric multiplicity of the eigenvalue 3 is 1 because its eigenspace is spanned by just one vector $\begin{bmatrix} 0 & 0 & 0 & 1 \end{bmatrix}^{\mathsf{T}}$. The total geometric multiplicity $\gamma_A$ is 2, which is the smallest it could be for a matrix with two distinct eigenvalues. Geometric multiplicities are defined in a later section.
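Geometric multiplicities like these can be computed as n minus the rank of (A − λI). The sketch below assumes a hypothetical 4-by-4 lower triangular matrix with diagonal (2, 2, 3, 3) and ones on the subdiagonal, and uses exact Gaussian elimination over fractions; the function names are introduced here for illustration.

```python
from fractions import Fraction

def rank(M):
    """Rank by Gaussian elimination over exact fractions."""
    rows = [[Fraction(x) for x in row] for row in M]
    n_rows, n_cols = len(rows), len(rows[0])
    r = 0  # number of pivots found so far
    for c in range(n_cols):
        pivot = next((i for i in range(r, n_rows) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, n_rows):
            factor = rows[i][c] / rows[r][c]
            rows[i] = [x - factor * y for x, y in zip(rows[i], rows[r])]
        r += 1
        if r == n_rows:
            break
    return r

def geometric_multiplicity(A, lam):
    """Nullity of A - lam*I, i.e. n - rank(A - lam*I)."""
    n = len(A)
    shifted = [[A[i][j] - (lam if i == j else 0) for j in range(n)] for i in range(n)]
    return n - rank(shifted)

A = [[2, 0, 0, 0],
     [1, 2, 0, 0],
     [0, 1, 3, 0],
     [0, 0, 1, 3]]   # hypothetical example matrix

print(geometric_multiplicity(A, 2), geometric_multiplicity(A, 3))
```

A value that is not an eigenvalue gives nullity 0, since (A − λI) then has full rank.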

Eigenvector-eigenvalue identity

For a Hermitian matrix A, the norm squared of the α-th component of a normalized eigenvector can be calculated using only the matrix eigenvalues and the eigenvalues of the corresponding minor matrix,

$$|v_{i\alpha}|^2 = \frac{\prod_{k}\bigl(\lambda_i(A) - \lambda_k(A_\alpha)\bigr)}{\prod_{k \neq i}\bigl(\lambda_i(A) - \lambda_k(A)\bigr)},$$

where $A_\alpha$ is the submatrix formed by removing the α-th row and column from the original matrix.[33][34][35] This identity also extends to diagonalizable matrices, and has been rediscovered many times in the literature.[34][36]

Eigenvalues and eigenfunctions of differential operators

The definitions of eigenvalue and eigenvector of a linear transformation T remain valid even if the underlying vector space is an infinite-dimensional Hilbert or Banach space. A widely used class of linear transformations acting on infinite-dimensional spaces are the differential operators on function spaces. Let D be a linear differential operator on the space $C^{\infty}$ of infinitely differentiable real functions of a real argument t. The eigenvalue equation for D is the differential equation

$$Df(t) = \lambda f(t).$$

The functions that satisfy this equation are eigenvectors of D and are commonly called eigenfunctions.

Derivative operator example

Consider the derivative operator $\tfrac{d}{dt}$ with eigenvalue equation

$$\frac{d}{dt}f(t) = \lambda f(t).$$

This differential equation can be solved by multiplying both sides by dt/f(t) and integrating. Its solution, the exponential function

$$f(t) = f(0)e^{\lambda t},$$

is the eigenfunction of the derivative operator. In this case the eigenfunction is itself a function of its associated eigenvalue. In particular, for λ = 0 the eigenfunction f(t) is a constant.
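A numerical illustration, assuming an arbitrarily chosen eigenvalue `lam` and initial value f(0) = 2: a central finite difference approximates the derivative of the exponential, and the result is compared with λ·f(t). The step size `h` is also an assumption of this sketch.

```python
import math

def derivative(f, t, h=1e-6):
    """Central finite-difference approximation of f'(t)."""
    return (f(t + h) - f(t - h)) / (2 * h)

lam = -0.7                                    # arbitrary illustrative eigenvalue

def f(t):
    """Candidate eigenfunction f(t) = f(0) * exp(lam * t) with f(0) = 2."""
    return 2.0 * math.exp(lam * t)

# Largest gap between the numerical derivative and lam * f(t) at a few points.
max_gap = max(abs(derivative(f, t) - lam * f(t)) for t in (0.0, 0.5, 1.0))
print(max_gap)
```

The gap is limited only by the accuracy of the finite-difference approximation.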

The main eigenfunction article gives other examples.

General definition

The concept of eigenvalues and eigenvectors extends naturally to arbitrary linear transformations on arbitrary vector spaces. Let V be any vector space over some field K of scalars, and let T be a linear transformation mapping V into V,

$$T : V \to V.$$

We say that a nonzero vector v ∈ V is an eigenvector of T if and only if there exists a scalar λ ∈ K such that

$$T(\mathbf{v}) = \lambda\mathbf{v}. \tag{5}$$

This equation is called the eigenvalue equation for T, and the scalar λ is the eigenvalue of T corresponding to the eigenvector v. T(v) is the result of applying the transformation T to the vector v, while λv is the product of the scalar λ with v.[37][38]

Eigenspaces, geometric multiplicity, and the eigenbasis

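Given an eigenvalue λ, consider the set

$$E = \{\mathbf{v} : T(\mathbf{v}) = \lambda\mathbf{v}\},$$

which is the union of the zero vector with the set of all eigenvectors associated with λ. E is called the eigenspace or characteristic space of T associated with λ.[39]

By definition of a linear transformation,

$$T(\mathbf{x} + \mathbf{y}) = T(\mathbf{x}) + T(\mathbf{y}), \qquad T(\alpha\mathbf{x}) = \alpha T(\mathbf{x}),$$

for x, y ∈ V and α ∈ K. Therefore, if u and v are eigenvectors of T associated with eigenvalue λ, namely u, v ∈ E, then

$$T(\mathbf{u} + \mathbf{v}) = \lambda(\mathbf{u} + \mathbf{v}), \qquad T(\alpha\mathbf{v}) = \lambda(\alpha\mathbf{v}).$$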

So, both u + v and αv are either zero or eigenvectors of T associated with λ, namely u + v, αv ∈ E, and E is closed under addition and scalar multiplication. The eigenspace E associated with λ is therefore a linear subspace of V.[40] If that subspace has dimension 1, it is sometimes called an eigenline.[41]

The geometric multiplicity γT(λ) of an eigenvalue λ is the dimension of the eigenspace associated with λ, i.e., the maximum number of linearly independent eigenvectors associated with that eigenvalue.[9][26][42] By the definition of eigenvalues and eigenvectors, γT(λ) ≥ 1 because every eigenvalue has at least one eigenvector.

The eigenspaces of T always form a direct sum. As a consequence, eigenvectors of different eigenvalues are always linearly independent. Therefore, the sum of the dimensions of the eigenspaces cannot exceed the dimension n of the vector space on which T operates, and there cannot be more than n distinct eigenvalues.[d]

Any subspace spanned by eigenvectors of T is an invariant subspace of T, and the restriction of T to such a subspace is diagonalizable. Moreover, if the entire vector space V can be spanned by the eigenvectors of T, or equivalently if the direct sum of the eigenspaces associated with all the eigenvalues of T is the entire vector space V, then a basis of V called an eigenbasis can be formed from linearly independent eigenvectors of T. When T admits an eigenbasis, T is diagonalizable.

Spectral theory

If λ is an eigenvalue of T, then the operator (T − λI) is not one-to-one, and therefore its inverse $(T - \lambda I)^{-1}$ does not exist. The converse is true for finite-dimensional vector spaces, but not for infinite-dimensional vector spaces. In general, the operator (T − λI) may not have an inverse even if λ is not an eigenvalue.

For this reason, in functional analysis eigenvalues can be generalized to the spectrum of a linear operator T as the set of all scalars λ for which the operator (T − λI) has no bounded inverse. The spectrum of an operator always contains all its eigenvalues but is not limited to them.

Associative algebras and representation theory

One can generalize the algebraic object that is acting on the vector space, replacing a single operator acting on a vector space with an algebra representation – an associative algebra acting on a module. The study of such actions is the field of representation theory.

The representation-theoretical concept of weight is an analog of eigenvalues, while weight vectors and weight spaces are the analogs of eigenvectors and eigenspaces, respectively.

A Hecke eigensheaf is a tensor-multiple of itself and is considered in the Langlands correspondence.


Dynamic equations


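The simplest difference equations have the form

$$x_t = a_1 x_{t-1} + a_2 x_{t-2} + \cdots + a_k x_{t-k}.$$

The solution of this equation for $x$ in terms of $t$ is found by using its characteristic equation

$$\lambda^k - a_1 \lambda^{k-1} - a_2 \lambda^{k-2} - \cdots - a_{k-1} \lambda - a_k = 0,$$

which can be found by stacking into matrix form a set of equations consisting of the above difference equation and the $k - 1$ equations $x_{t-1} = x_{t-1}, \ldots, x_{t-k+1} = x_{t-k+1}$, giving a $k$-dimensional system of the first order in the stacked variable vector $[x_t, \ldots, x_{t-k+1}]$ in terms of its once-lagged value, and taking the characteristic equation of this system's matrix. This equation gives $k$ characteristic roots $\lambda_1, \ldots, \lambda_k$ for use in the solution equation

$$x_t = c_1 \lambda_1^t + \cdots + c_k \lambda_k^t.$$

A similar procedure is used for solving a differential equation of the form

$$\frac{d^k x}{dt^k} + a_{k-1} \frac{d^{k-1} x}{dt^{k-1}} + \cdots + a_1 \frac{dx}{dt} + a_0 x = 0.$$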
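As a rough illustration of the characteristic-root method for difference equations, the sketch below treats a second-order case. The specific recurrence (the Fibonacci recurrence, with coefficients 1 and 1 and starting values 0 and 1) and the function names are assumptions chosen for this example; the closed form used here also assumes the two characteristic roots are distinct.

```python
import math

# Hypothetical example: x_t = a1*x_{t-1} + a2*x_{t-2} with a1 = a2 = 1.
a1, a2 = 1.0, 1.0

# Characteristic equation: lambda^2 - a1*lambda - a2 = 0.
disc = math.sqrt(a1 * a1 + 4.0 * a2)
lam1 = (a1 + disc) / 2.0
lam2 = (a1 - disc) / 2.0

# Fit c1, c2 to the (assumed) initial conditions x_0 = 0, x_1 = 1:
#   c1 + c2 = x0   and   c1*lam1 + c2*lam2 = x1
x0, x1 = 0.0, 1.0
c1 = (x1 - x0 * lam2) / (lam1 - lam2)
c2 = x0 - c1


def closed_form(t):
    """Solution x_t = c1*lam1**t + c2*lam2**t built from the roots."""
    return c1 * lam1 ** t + c2 * lam2 ** t


def by_recursion(t):
    """Reference value obtained by iterating the difference equation."""
    prev, cur = x0, x1
    for _ in range(t):
        prev, cur = cur, a1 * cur + a2 * prev
    return prev
```

Comparing `closed_form(t)` with `by_recursion(t)` for small `t` is a quick sanity check that the roots and constants were fitted correctly.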


Calculation


The calculation of eigenvalues and eigenvectors is a topic where theory, as presented in elementary linear algebra textbooks, is often very far from practice.

Classical method

The classical method is to first find the eigenvalues, and then calculate the eigenvectors for each eigenvalue. It is in several ways poorly suited to inexact arithmetic such as floating-point.

Eigenvalues

The eigenvalues of a matrix can be determined by finding the roots of the characteristic polynomial. This is easy for 2 × 2 matrices, but the difficulty increases rapidly with the size of the matrix.

In theory, the coefficients of the characteristic polynomial can be computed exactly, since they are sums of products of matrix elements; and there are algorithms that can find all the roots of a polynomial of arbitrary degree to any required accuracy.[43] However, this approach is not viable in practice because the coefficients would be contaminated by unavoidable round-off errors, and the roots of a polynomial can be an extremely sensitive function of the coefficients (as exemplified by Wilkinson's polynomial).[43] Even for matrices whose elements are integers the calculation becomes nontrivial, because the sums are very long; the constant term is the determinant, which for an n × n matrix is a sum of n! different products.[e]

Explicit algebraic formulas for the roots of a polynomial exist only if the degree is 4 or less. According to the Abel–Ruffini theorem there is no general, explicit and exact algebraic formula for the roots of a polynomial with degree 5 or more. (Generality matters because any polynomial with degree n is the characteristic polynomial of some companion matrix of order n.) Therefore, for matrices of order 5 or more, the eigenvalues and eigenvectors cannot be obtained by an explicit algebraic formula and must be computed by approximate numerical methods. Even the exact formula for the roots of a degree 3 polynomial is numerically impractical.

Eigenvectors

Once the (exact) value of an eigenvalue is known, the corresponding eigenvectors can be found by finding nonzero solutions of the eigenvalue equation, which becomes a system of linear equations with known coefficients. For example, once it is known that 6 is an eigenvalue of the matrix

$$A = \begin{bmatrix} 4 & 1 \\ 6 & 3 \end{bmatrix}$$

we can find its eigenvectors by solving the equation $A\mathbf{v} = 6\mathbf{v}$, that is

$$\begin{bmatrix} 4 & 1 \\ 6 & 3 \end{bmatrix} \begin{bmatrix} x \\ y \end{bmatrix} = 6 \begin{bmatrix} x \\ y \end{bmatrix}.$$

This matrix equation is equivalent to two linear equations

$$4x + y = 6x, \qquad 6x + 3y = 6y,$$

that is

$$-2x + y = 0, \qquad 6x - 3y = 0.$$

Both equations reduce to the single linear equation $y = 2x$. Therefore, any vector of the form $[a, 2a]^{\mathsf T}$, for any nonzero real number $a$, is an eigenvector of $A$ with eigenvalue $\lambda = 6$.

The matrix $A$ above has another eigenvalue $\lambda = 1$. A similar calculation shows that the corresponding eigenvectors are the nonzero solutions of $3x + y = 0$, that is, any vector of the form $[b, -3b]^{\mathsf T}$, for any nonzero real number $b$.
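A hand calculation of this kind can be checked mechanically by multiplying a candidate eigenvector by the matrix. The sketch below uses an illustrative 2 × 2 integer matrix with eigenvalues 6 and 1; the matrix entries and the helper names `matvec` and `is_eigenpair` are assumptions of this example, not part of any library.

```python
# Illustrative 2x2 matrix (an assumption of this sketch) with eigenvalues 6 and 1.
A = [[4, 1],
     [6, 3]]


def matvec(M, v):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def is_eigenpair(M, lam, v):
    """True if v is nonzero and M v equals lam * v (exact integer arithmetic)."""
    return any(v) and matvec(M, v) == [lam * x for x in v]


# (A - 6I) v = 0 reduces to y = 2x, so candidates have the form (a, 2a).
# (A - 1I) v = 0 reduces to 3x + y = 0, so candidates have the form (b, -3b).
checks = [
    is_eigenpair(A, 6, [1, 2]),
    is_eigenpair(A, 6, [5, 10]),
    is_eigenpair(A, 1, [1, -3]),
]
```

Exact comparison is safe here only because all entries are integers; with floating-point data a tolerance would be needed instead.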

Simple iterative methods

The converse approach, of first seeking the eigenvectors and then determining each eigenvalue from its eigenvector, turns out to be far more tractable for computers. The easiest algorithm here consists of picking an arbitrary starting vector and then repeatedly multiplying it with the matrix (optionally normalizing the vector to keep its elements of reasonable size); this makes the vector converge towards an eigenvector (generically, one belonging to the eigenvalue of largest magnitude). A variation is to instead multiply the vector by $(A - \mu I)^{-1}$; this causes it to converge to an eigenvector of the eigenvalue closest to $\mu$.

If $\mathbf{v}$ is (a good approximation of) an eigenvector of $A$, then the corresponding eigenvalue can be computed as

$$\lambda = \frac{\mathbf{v}^* A \mathbf{v}}{\mathbf{v}^* \mathbf{v}}$$

where $\mathbf{v}^*$ denotes the conjugate transpose of $\mathbf{v}$.
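The two iterations described above, together with the quotient used to recover the eigenvalue, can be sketched in a few lines for real matrices. Everything here is illustrative: the function names, the fixed iteration counts, the 2 × 2 test matrix, and the use of Cramer's rule for the shifted solve (which limits `inverse_iteration` to 2 × 2 inputs) are assumptions of this sketch, and no convergence test is performed.

```python
import math


def matvec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def rayleigh_quotient(M, v):
    """(v^T M v) / (v^T v) for a real vector v."""
    Mv = matvec(M, v)
    return sum(a * b for a, b in zip(v, Mv)) / sum(x * x for x in v)


def power_iteration(M, v0, steps=200):
    """Repeatedly multiply by M, normalizing each time."""
    v = normalize(v0)
    for _ in range(steps):
        v = normalize(matvec(M, v))
    return rayleigh_quotient(M, v), v


def solve_2x2(M, b):
    """Solve a 2x2 system M x = b by Cramer's rule."""
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    return [(b[0] * M[1][1] - M[0][1] * b[1]) / det,
            (M[0][0] * b[1] - b[0] * M[1][0]) / det]


def inverse_iteration(M, mu, v0, steps=100):
    """Repeatedly apply (M - mu*I)^{-1}; 2x2 matrices only in this sketch."""
    shifted = [[M[0][0] - mu, M[0][1]],
               [M[1][0], M[1][1] - mu]]
    v = normalize(v0)
    for _ in range(steps):
        v = normalize(solve_2x2(shifted, v))
    return rayleigh_quotient(M, v), v


# Hypothetical test matrix with eigenvalues 6 and 1.
A = [[4.0, 1.0],
     [6.0, 3.0]]
lam_big, v_big = power_iteration(A, [1.0, 0.0])
lam_near, v_near = inverse_iteration(A, 0.5, [1.0, 0.0])
```

With the shift 0.5, the inverse iteration is drawn to the eigenvalue nearest 0.5, while the plain iteration is drawn to the eigenvalue of largest magnitude.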

Modern methods

Efficient, accurate methods to compute eigenvalues and eigenvectors of arbitrary matrices were not known until the QR algorithm was designed in 1961.[43] Combining the Householder transformation with the LU decomposition results in an algorithm with better convergence than the QR algorithm.[citation needed] For large Hermitian sparse matrices, the Lanczos algorithm is one example of an efficient iterative method to compute eigenvalues and eigenvectors, among several other possibilities.[43]

Most numeric methods that compute the eigenvalues of a matrix also determine a set of corresponding eigenvectors as a by-product of the computation, although sometimes implementors choose to discard the eigenvector information as soon as it is no longer needed.


Applications


Geometric transformations

Eigenvectors and eigenvalues can be useful for understanding linear transformations of geometric shapes. The following table presents some example transformations in the plane along with their 2×2 matrices, eigenvalues, and eigenvectors.

The characteristic equation for a rotation is a quadratic equation with discriminant $D = -4\sin^2\theta$, which is a negative number whenever θ is not an integer multiple of 180°. Therefore, except for these special cases, the two eigenvalues are complex numbers, $\cos\theta \pm i\sin\theta$; and all eigenvectors have non-real entries. Indeed, except for those special cases, a rotation changes the direction of every nonzero vector in the plane.
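The rotation case can be checked numerically. The sketch below assumes the usual counterclockwise rotation matrix with rows (cos θ, −sin θ) and (sin θ, cos θ), whose trace is 2 cos θ and whose determinant is 1, and solves the resulting quadratic with complex arithmetic; the function name is hypothetical.

```python
import cmath
import math


def rotation_eigenvalues(theta):
    """Roots of lambda^2 - 2*cos(theta)*lambda + 1 = 0."""
    trace = 2.0 * math.cos(theta)
    disc = trace * trace - 4.0      # equals -4*sin(theta)**2
    root = cmath.sqrt(disc)         # imaginary unless sin(theta) == 0
    return (trace + root) / 2.0, (trace - root) / 2.0


theta = math.pi / 3
lam_plus, lam_minus = rotation_eigenvalues(theta)
```

Both roots have modulus 1 and form a complex-conjugate pair, matching the observation that a generic rotation leaves no real direction unchanged.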

A linear transformation that takes a square to a rectangle of the same area (a squeeze mapping) has reciprocal eigenvalues.

Principal component analysis

The eigendecomposition of a symmetric positive semidefinite (PSD) matrix yields an orthogonal basis of eigenvectors, each of which has a nonnegative eigenvalue. The orthogonal decomposition of a PSD matrix is used in multivariate analysis, where the sample covariance matrices are PSD. This orthogonal decomposition is called principal component analysis (PCA) in statistics. PCA studies linear relations among variables. PCA is performed on the covariance matrix or the correlation matrix (in which each variable is scaled to have its sample variance equal to one). For the covariance or correlation matrix, the eigenvectors correspond to principal components and the eigenvalues to the variance explained by the principal components. Principal component analysis of the correlation matrix provides an orthogonal basis for the space of the observed data: In this basis, the largest eigenvalues correspond to the principal components that are associated with most of the covariability among a number of observed data.
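For two variables the whole procedure fits in a short sketch, because a symmetric 2 × 2 matrix has a closed-form eigendecomposition. The sample points, function names, and the choice of the unbiased (n − 1) covariance estimator are assumptions made for this illustration.

```python
import math


def covariance_2d(points):
    """Sample covariance matrix [[sxx, sxy], [sxy, syy]] of 2-D points."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / (n - 1)
    syy = sum((p[1] - my) ** 2 for p in points) / (n - 1)
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / (n - 1)
    return [[sxx, sxy], [sxy, syy]]


def eig_sym_2x2(S):
    """Eigenpairs of a symmetric 2x2 matrix, largest eigenvalue first."""
    a, b, c = S[0][0], S[0][1], S[1][1]
    if b == 0.0:
        # Already diagonal: the coordinate axes are the eigenvectors.
        pairs = [(a, (1.0, 0.0)), (c, (0.0, 1.0))]
        return sorted(pairs, key=lambda p: p[0], reverse=True)
    mean = (a + c) / 2.0
    radius = math.hypot((a - c) / 2.0, b)
    pairs = []
    for lam in (mean + radius, mean - radius):
        vx, vy = b, lam - a          # solves (S - lam*I) v = 0
        norm = math.hypot(vx, vy)
        pairs.append((lam, (vx / norm, vy / norm)))
    return pairs


# Hypothetical sample lying exactly on the line y = 2x.
points = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
S = covariance_2d(points)
(var1, pc1), (var2, pc2) = eig_sym_2x2(S)
explained = var1 / (var1 + var2)     # share of variance on the first component
```

Because the sample is perfectly collinear, the first principal component points along the line and carries essentially all of the variance.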

Principal component analysis is used as a means of dimensionality reduction in the study of large data sets, such as those encountered in bioinformatics. In Q methodology, the eigenvalues of the correlation matrix determine the Q-methodologist's judgment of practical significance (which differs from the statistical significance of hypothesis testing; cf. criteria for determining the number of factors). More generally, principal component analysis can be used as a method of factor analysis in structural equation modeling.

Graphs

In spectral graph theory, an eigenvalue of a graph is defined as an eigenvalue of the graph's adjacency matrix $A$, or (increasingly) of the graph's Laplacian matrix due to its discrete Laplace operator, which is either $D - A$ (sometimes called the combinatorial Laplacian) or $I - D^{-1/2} A D^{-1/2}$ (sometimes called the normalized Laplacian), where $D$ is a diagonal matrix with $D_{ii}$ equal to the degree of vertex $v_i$, and in $D^{-1/2}$, the $i$th diagonal entry is $1/\sqrt{\deg(v_i)}$. The $k$th principal eigenvector of a graph is defined as either the eigenvector corresponding to the $k$th largest or $k$th smallest eigenvalue of the Laplacian. The first principal eigenvector of the graph is also referred to merely as the principal eigenvector.

The principal eigenvector is used to measure the centrality of a graph's vertices. An example is Google's PageRank algorithm. The principal eigenvector of a modified adjacency matrix of the World Wide Web graph gives the page ranks as its components. This vector corresponds to the stationary distribution of the Markov chain represented by the row-normalized adjacency matrix; however, the adjacency matrix must first be modified to ensure a stationary distribution exists. The eigenvector of the second smallest eigenvalue of the Laplacian can be used to partition the graph into clusters, via spectral clustering. Other methods are also available for clustering.

Markov chains

A Markov chain is represented by a matrix whose entries are the transition probabilities between states of a system. In particular the entries are non-negative, and every row of the matrix sums to one, being the sum of probabilities of transitions from one state to some other state of the system. The Perron–Frobenius theorem gives sufficient conditions for a Markov chain to have a unique dominant eigenvalue, which governs the convergence of the system to a steady state.
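The steady state can be approximated by applying the transition matrix repeatedly to any starting distribution. The sketch below assumes a row-stochastic matrix (each row sums to one, as described above) and uses a made-up two-state chain; the function names and the fixed number of steps are illustrative choices.

```python
def step(dist, P):
    """One step of the chain: new_j = sum_i dist_i * P[i][j]."""
    n = len(P)
    return [sum(dist[i] * P[i][j] for i in range(n)) for j in range(n)]


def stationary(P, steps=500):
    """Approximate the steady state by iterating from the uniform distribution."""
    n = len(P)
    dist = [1.0 / n] * n
    for _ in range(steps):
        dist = step(dist, P)
    return dist


# Hypothetical two-state chain; each row sums to one.
P = [[0.9, 0.1],
     [0.5, 0.5]]
pi = stationary(P)
```

For this chain the balance condition 0.1·π₀ = 0.5·π₁ gives the steady state (5/6, 1/6), which is a left eigenvector of `P` for the eigenvalue 1.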

Vibration analysis

Eigenvalue problems occur naturally in the vibration analysis of mechanical structures with many degrees of freedom. The eigenvalues are the natural frequencies (or eigenfrequencies) of vibration, and the eigenvectors are the shapes of these vibrational modes. In particular, undamped vibration is governed by

$$m\ddot{x} + kx = 0$$

or

$$m\ddot{x} = -kx.$$

That is, acceleration is proportional to position (i.e., we expect $x$ to be sinusoidal in time).

In $n$ dimensions, $m$ becomes a mass matrix and $k$ a stiffness matrix. Admissible solutions are then a linear combination of solutions to the generalized eigenvalue problem

$$kx = \omega^2 mx$$

where $\omega^2$ is the eigenvalue and $\omega$ is the (imaginary) angular frequency. The principal vibration modes are different from the principal compliance modes, which are the eigenvectors of $k$ alone. Furthermore, damped vibration, governed by

$$m\ddot{x} + c\dot{x} + kx = 0$$

leads to a so-called quadratic eigenvalue problem,

$$\left(\omega^2 m + \omega c + k\right) x = 0.$$

This can be reduced to a generalized eigenvalue problem by algebraic manipulation at the cost of solving a larger system.
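As a small worked case of the undamped generalized eigenvalue problem, consider two masses coupled by springs. The sketch assumes a diagonal mass matrix and a symmetric positive-definite 2 × 2 stiffness matrix, so the problem can be symmetrized and solved in closed form; the numerical values (unit masses, unit springs in a wall-mass-mass-wall chain) and the function name are hypothetical.

```python
import math


def natural_frequencies_2dof(m1, m2, K):
    """Angular frequencies w solving K x = w^2 M x with M = diag(m1, m2).

    Assumes K is symmetric positive definite, so both eigenvalues w^2 > 0.
    """
    # S = M^{-1/2} K M^{-1/2} is symmetric and has the same eigenvalues w^2.
    a = K[0][0] / m1
    c = K[1][1] / m2
    b = K[0][1] / math.sqrt(m1 * m2)
    mean = (a + c) / 2.0
    radius = math.hypot((a - c) / 2.0, b)
    return math.sqrt(mean - radius), math.sqrt(mean + radius)


# Hypothetical chain wall-spring-mass-spring-mass-spring-wall, all constants 1.
K = [[2.0, -1.0],
     [-1.0, 2.0]]
w_low, w_high = natural_frequencies_2dof(1.0, 1.0, K)
```

The lower frequency corresponds to the two masses moving together, mode shape (1, 1), and the higher one to the masses moving in opposition, mode shape (1, −1).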

The orthogonality properties of the eigenvectors allow decoupling of the differential equations, so that the system can be represented as a linear summation of the eigenvectors. For complex structures, the eigenvalue problem is often solved using finite element analysis, which neatly generalizes the solution of scalar-valued vibration problems.

Tensor of moment of inertia

In mechanics, the eigenvectors of the moment of inertia tensor define the principal axes of a rigid body. The tensor of moment of inertia is a key quantity required to determine the rotation of a rigid body around its center of mass.

Stress tensor

In solid mechanics, the stress tensor is symmetric and so can be decomposed into a diagonal tensor with the eigenvalues on the diagonal and eigenvectors as a basis. Because it is diagonal, in this orientation, the stress tensor has no shear components; the components it does have are the principal components.

Schrödinger equation

An example of an eigenvalue equation where the transformation is represented in terms of a differential operator is the time-independent Schrödinger equation in quantum mechanics:

$$H\psi_E = E\psi_E$$

where $H$, the Hamiltonian, is a second-order differential operator and $\psi_E$, the wavefunction, is one of its eigenfunctions corresponding to the eigenvalue $E$, interpreted as its energy.

However, in the case where one is interested only in the bound state solutions of the Schrödinger equation, one looks for $\psi_E$ within the space of square integrable functions. Since this space is a Hilbert space with a well-defined scalar product, one can introduce a basis set in which $\psi_E$ and $H$ can be represented as a one-dimensional array (i.e., a vector) and a matrix respectively. This allows one to represent the Schrödinger equation in a matrix form.

The bra–ket notation is often used in this context. A vector, which represents a state of the system, in the Hilbert space of square integrable functions is represented by $|\Psi_E\rangle$. In this notation, the Schrödinger equation is:

$$H|\Psi_E\rangle = E|\Psi_E\rangle$$

where $|\Psi_E\rangle$ is an eigenstate of $H$ and $E$ represents the eigenvalue. $H$ is an observable self-adjoint operator, the infinite-dimensional analog of Hermitian matrices. As in the matrix case, in the equation above $H|\Psi_E\rangle$ is understood to be the vector obtained by application of the transformation $H$ to $|\Psi_E\rangle$.

Wave transport

Light, acoustic waves, and microwaves are randomly scattered numerous times when traversing a static disordered system. Even though multiple scattering repeatedly randomizes the waves, ultimately coherent wave transport through the system is a deterministic process which can be described by a field transmission matrix $\mathbf{t}$.[44][45] The eigenvectors of the transmission operator $\mathbf{t}^\dagger \mathbf{t}$ form a set of disorder-specific input wavefronts which enable waves to couple into the disordered system's eigenchannels: the independent pathways waves can travel through the system. The eigenvalues, $\tau$, of $\mathbf{t}^\dagger \mathbf{t}$ correspond to the intensity transmittance associated with each eigenchannel. One of the remarkable properties of the transmission operator of diffusive systems is its bimodal eigenvalue distribution with $\tau_{\max} = 1$ and $\tau_{\min} = 0$.[45] Furthermore, one of the striking properties of open eigenchannels, beyond the perfect transmittance, is the statistically robust spatial profile of the eigenchannels.[46]

Molecular orbitals

In quantum mechanics, and in particular in atomic and molecular physics, within the Hartree–Fock theory, the atomic and molecular orbitals can be defined by the eigenvectors of the Fock operator. The corresponding eigenvalues are interpreted as ionization potentials via Koopmans' theorem. In this case, the term eigenvector is used in a somewhat more general meaning, since the Fock operator is explicitly dependent on the orbitals and their eigenvalues. Thus, if one wants to underline this aspect, one speaks of nonlinear eigenvalue problems. Such equations are usually solved by an iteration procedure, called in this case the self-consistent field method. In quantum chemistry, one often represents the Hartree–Fock equation in a non-orthogonal basis set. This particular representation is a generalized eigenvalue problem called the Roothaan equations.

Geology and glaciology


In geology, especially in the study of glacial till, eigenvectors and eigenvalues are used as a method by which a mass of information of a clast's fabric can be summarized in a 3-D space by six numbers. In the field, a geologist may collect such data for hundreds or thousands of clasts in a soil sample, which can be compared graphically or as a stereographic projection. Graphically, many geologists use a Tri-Plot (Sneed and Folk) diagram.[47][48] A stereographic projection projects 3-dimensional spaces onto a two-dimensional plane. A type of stereographic projection is the Wulff net, which is commonly used in crystallography to create stereograms.[49]

The output for the orientation tensor is in the three orthogonal (perpendicular) axes of space. The three eigenvectors are ordered $\mathbf{v}_1, \mathbf{v}_2, \mathbf{v}_3$ by their eigenvalues $E_1 \geq E_2 \geq E_3$;[50] then $\mathbf{v}_1$ is the primary orientation/dip of clast, $\mathbf{v}_2$ is the secondary and $\mathbf{v}_3$ is the tertiary, in terms of strength. The clast orientation is defined as the direction of the eigenvector, on a compass rose of 360°. Dip is measured as the eigenvalue, the modulus of the tensor: this is valued from 0° (no dip) to 90° (vertical). The relative values of $E_1$, $E_2$, and $E_3$ are dictated by the nature of the sediment's fabric. If $E_1 = E_2 = E_3$, the fabric is said to be isotropic. If $E_1 = E_2 > E_3$, the fabric is said to be planar. If $E_1 > E_2 > E_3$, the fabric is said to be linear.[51]

Basic reproduction number

The basic reproduction number ($R_0$) is a fundamental number in the study of how infectious diseases spread. If one infectious person is put into a population of completely susceptible people, then $R_0$ is the average number of people that one typical infectious person will infect. The generation time of an infection is the time, $t_G$, from one person becoming infected to the next person becoming infected. In a heterogeneous population, the next generation matrix defines how many people in the population will become infected after time $t_G$ has passed. The value $R_0$ is then the largest eigenvalue of the next generation matrix.[52][53]
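For a population split into two groups, the largest eigenvalue of the next generation matrix has a closed form. The sketch below is purely illustrative: the matrix entries are invented, the convention that entry `K[i][j]` counts new infections in group `i` caused by one case in group `j` is an assumption, and the function name is hypothetical.

```python
import math


def largest_eigenvalue_2x2(K):
    """Largest eigenvalue of a 2x2 matrix with non-negative entries."""
    (a, b), (c, d) = K
    # For non-negative entries (a - d)^2 + 4*b*c >= 0, so both roots are real.
    disc = (a - d) ** 2 + 4.0 * b * c
    return ((a + d) + math.sqrt(disc)) / 2.0


# Hypothetical two-group next generation matrix (made-up numbers).
K = [[1.5, 0.5],
     [0.5, 1.5]]
R0 = largest_eigenvalue_2x2(K)
```

With these invented numbers the eigenvalues are 2 and 1, so the sketch yields a basic reproduction number of 2, larger than either group would sustain through within-group transmission alone (1.5).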

Eigenfaces

In image processing, processed images of faces can be seen as vectors whose components are the brightnesses of each pixel.[54] The dimension of this vector space is the number of pixels. The eigenvectors of the covariance matrix associated with a large set of normalized pictures of faces are called eigenfaces; this is an example of principal component analysis. They are very useful for expressing any face image as a linear combination of some of them. In the facial recognition branch of biometrics, eigenfaces provide a means of applying data compression to faces for identification purposes. Research on eigen vision systems for determining hand gestures has also been carried out.

Similar to this concept, eigenvoices represent the general direction of variability in human pronunciations of a particular utterance, such as a word in a language. Based on a linear combination of such eigenvoices, a new voice pronunciation of the word can be constructed. These concepts have been found useful in automatic speech recognition systems for speaker adaptation.


See also

Notes

Sources

Further reading

External links

