Filter bubble

Intellectual isolation through internet algorithms From Wikipedia, the free encyclopedia


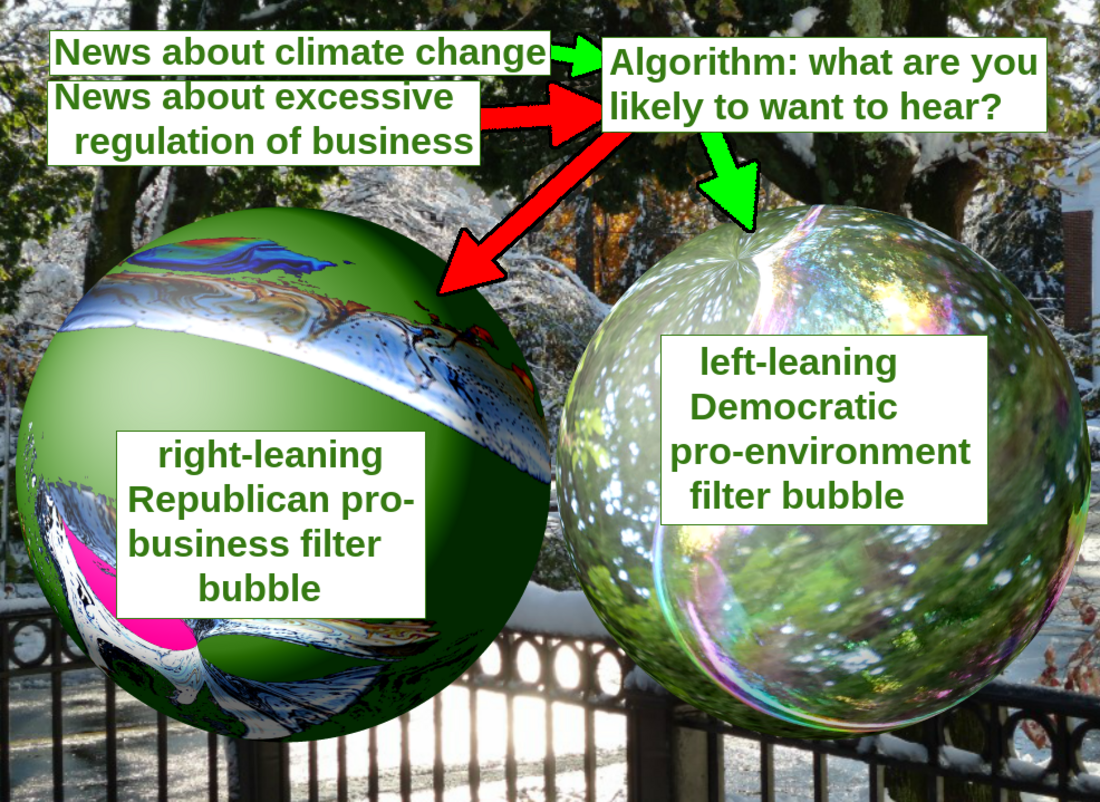

A filter bubble or ideological frame is a state of intellectual isolation[1] that can result from personalized searches, recommendation systems, and algorithmic curation. The search results are based on information about the user, such as their location, past click-behavior, and search history.[2] Consequently, users become separated from information that disagrees with their viewpoints, effectively isolating them in their own cultural or ideological bubbles, resulting in a limited and customized view of the world.[3] The choices made by these algorithms are only sometimes transparent.[4] Prime examples include Google Personalized Search results and Facebook's personalized news-stream.

However, there are conflicting reports about the extent to which personalized filtering happens and whether such activity is beneficial or harmful, with various studies producing inconclusive results.

The term filter bubble was coined by internet activist Eli Pariser circa 2010. In Pariser's influential book of the same name, The Filter Bubble (2011), it was predicted that individualized personalization by algorithmic filtering would lead to intellectual isolation and social fragmentation.[5] The bubble effect may have negative implications for civic discourse, according to Pariser, but contrasting views regard the effect as minimal[6] and addressable.[7] According to Pariser, users get less exposure to conflicting viewpoints and are isolated intellectually in their informational bubble.[8] He related an example in which one user searched Google for "BP" and got investment news about BP, while another searcher got information about the Deepwater Horizon oil spill, noting that the two search results pages were "strikingly different" despite use of the same key words.[8][9][10][6] The results of the U.S. presidential election in 2016 have been associated with the influence of social media platforms such as Twitter and Facebook,[11] and as a result have called into question the effects of the "filter bubble" phenomenon on user exposure to fake news and echo chambers,[12] spurring new interest in the term,[13] with many concerned that the phenomenon may harm democracy and well-being by making the effects of misinformation worse.[14][15][13][16][17][18]


Concept

Pariser defined his concept of a filter bubble in more formal terms as "that personal ecosystem of information that's been catered by these algorithms."[8] An internet user's past browsing and search history is built up over time when they indicate interest in topics by "clicking links, viewing friends, putting movies in [their] queue, reading news stories," and so forth.[19] An internet firm then uses this information to target advertising to the user, or make certain types of information appear more prominently in search results pages.[19]

This process is not random, as it operates under a three-step process, per Pariser, who states, "First, you figure out who people are and what they like. Then, you provide them with content and services that best fit them. Finally, you tune in to get the fit just right. Your identity shapes your media."[20] Pariser also reports:

According to one Wall Street Journal study, the top fifty Internet sites, from CNN to Yahoo to MSN, install an average of 64 data-laden cookies and personal tracking beacons. Search for a word like "depression" on Dictionary.com, and the site installs up to 223 tracking cookies and beacons on your computer so that other Web sites can target you with antidepressants. Share an article about cooking on ABC News, and you may be chased around the Web by ads for Teflon-coated pots. Open—even for an instant—a page listing signs that your spouse may be cheating and prepare to be haunted by DNA paternity-test ads.[21]
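The three-step loop Pariser describes (figure out what people like, serve content that fits, tune the fit) can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not any real platform's algorithm: the function names, the topic labels, and the rule that the user always clicks the top item are all hypothetical.

```python
from collections import Counter

def build_profile(click_history):
    """Step 1: estimate what the user likes from the topics they clicked."""
    return Counter(click_history)

def rank_items(items, profile):
    """Step 2: order candidate items so the most-clicked topics come first."""
    return sorted(items, key=lambda item: profile[item["topic"]], reverse=True)

def simulate(items, click_history, rounds=3, shown=2):
    """Step 3: feed each round's click back into the profile and re-rank."""
    history = list(click_history)
    feed = []
    for _ in range(rounds):
        feed = rank_items(items, build_profile(history))[:shown]
        # Assumption: the user clicks only the top item they are shown.
        history.append(feed[0]["topic"])
    return feed, history

# Hypothetical catalogue of stories, each tagged with a single topic.
items = [
    {"title": "Oil spill update", "topic": "environment"},
    {"title": "Energy stocks rally", "topic": "finance"},
    {"title": "Election preview", "topic": "politics"},
    {"title": "Quarterly earnings", "topic": "finance"},
]

feed, history = simulate(items, ["finance"])
print([item["title"] for item in feed])
```

Starting from a single finance click, every round shows only finance stories, so the other topics never get a chance to be clicked. That self-reinforcement is the narrowing effect the concept describes.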

Link-click data gathered through site traffic measurements indicates that filter bubbles can be collective or individual.[22]

As of 2011, one engineer had told Pariser that Google looked at 57 different pieces of data to personally tailor a user's search results, including non-cookie data such as the type of computer being used and the user's physical location.[23]

Pariser's idea of the filter bubble was popularized after the TED talk in May 2011, in which he gave examples of how filter bubbles work and where they can be seen. In a test seeking to demonstrate the filter bubble effect, Pariser asked several friends to search for the word "Egypt" on Google and send him the results. Comparing two of the friends' first pages of results, while there was overlap between them on topics like news and travel, one friend's results prominently included links to information on the then-ongoing Egyptian revolution of 2011, while the other friend's first page of results did not include such links.[24]

In The Filter Bubble, Pariser warns that a potential downside to filtered searching is that it "closes us off to new ideas, subjects, and important information,"[25] and "creates the impression that our narrow self-interest is all that exists."[9] In his view, filter bubbles are potentially harmful to both individuals and society. He criticized Google and Facebook for offering users "too much candy and not enough carrots."[26] He warned that "invisible algorithmic editing of the web" may limit our exposure to new information and narrow our outlook.[26] According to Pariser, the detrimental effects of filter bubbles include harm to the general society in the sense that they have the possibility of "undermining civic discourse" and making people more vulnerable to "propaganda and manipulation."[9] He wrote:

A world constructed from the familiar is a world in which there's nothing to learn ... (since there is) invisible autopropaganda, indoctrinating us with our own ideas.

— Eli Pariser in The Economist, 2011[27]

Many people are unaware that filter bubbles even exist. This can be seen in an article in The Guardian, which mentioned the fact that "more than 60% of Facebook users are entirely unaware of any curation on Facebook at all, believing instead that every single story from their friends and followed pages appeared in their news feed."[28] A brief explanation for how Facebook decides what goes on a user's news feed is through an algorithm that takes into account "how you have interacted with similar posts in the past."[28]

Extensions of concept

A filter bubble has been described as exacerbating a phenomenon called splinternet or cyberbalkanization,[Note 1] which happens when the internet becomes divided into sub-groups of like-minded people who become insulated within their own online community and fail to get exposure to different views. This concern dates back to the early days of the publicly accessible internet, with the term "cyberbalkanization" being coined in 1996.[29][30][31] Other terms have been used to describe this phenomenon, including "ideological frames"[9] and "the figurative sphere surrounding you as you search the internet."[19]

The concept of a filter bubble has been extended into other areas, to describe societies that self-segregate according to political views but also according to economic, social, and cultural situations.[32] That bubbling results in a loss of the broader community and creates the sense that, for example, children do not belong at social events unless those events were especially planned to be appealing for children and unappealing for adults without children.[32]

Barack Obama's farewell address identified a similar concept to filter bubbles as a "threat to [Americans'] democracy," i.e., the "retreat into our own bubbles, ...especially our social media feeds, surrounded by people who look like us and share the same political outlook and never challenge our assumptions... And increasingly, we become so secure in our bubbles that we start accepting only information, whether it's true or not, that fits our opinions, instead of basing our opinions on the evidence that is out there."[33]

Comparison with echo chambers

Both "echo chambers" and "filter bubbles" describe situations where individuals are exposed to a narrow range of opinions and perspectives that reinforce their existing beliefs and biases, but there are some subtle differences between the two, especially in practices surrounding social media.[34][35]

Specific to news media, an echo chamber is a metaphorical description of a situation in which beliefs are amplified or reinforced by communication and repetition inside a closed system.[36][37] Based on the sociological concept of selective exposure theory, the term is a metaphor based on the acoustic echo chamber, where sounds reverberate in a hollow enclosure. With regard to social media, this sort of situation feeds into explicit mechanisms of self-selected personalization, which describes all processes in which users of a given platform can actively opt in and out of information consumption, such as a user's ability to follow other users or select into groups.[38]

In an echo chamber, people are able to seek out information that reinforces their existing views, potentially as an unconscious exercise of confirmation bias. This sort of feedback regulation may increase political and social polarization and extremism. This can lead to users aggregating into homophilic clusters within social networks, which contributes to group polarization.[39] "Echo chambers" reinforce an individual's beliefs without factual support. Individuals are surrounded by those who acknowledge and follow the same viewpoints, but they also possess the agency to break outside of the echo chambers.[40]

On the other hand, filter bubbles are implicit mechanisms of pre-selected personalization, where a user's media consumption is created by personalized algorithms; the content a user sees is filtered through an AI-driven algorithm that reinforces their existing beliefs and preferences, potentially excluding contrary or diverse perspectives. In this case, users have a more passive role and are perceived as victims of a technology that automatically limits their exposure to information that would challenge their world view.[38] Some researchers argue, however, that because users still play an active role in selectively curating their own newsfeeds and information sources through their interactions with search engines and social media networks, they directly assist in the filtering process by AI-driven algorithms, thus effectively engaging in self-segregating filter bubbles.[41]

Despite their differences, the usage of these terms goes hand in hand in both academic and platform studies. It is often hard to distinguish between the two concepts in social network studies because of limited access to the filtering algorithms, access that might otherwise enable researchers to compare and contrast the agencies of the two concepts.[42] This type of research will continue to grow more difficult to conduct, as many social media networks have also begun to limit API access needed for academic research.[43]


Reactions and studies

Media reactions

There are conflicting reports about the extent to which personalized filtering happens and whether such activity is beneficial or harmful. Analyst Jacob Weisberg, writing in June 2011 for Slate, did a small non-scientific experiment to test Pariser's theory which involved five associates with different ideological backgrounds conducting a series of searches, "John Boehner," "Barney Frank," "Ryan plan," and "Obamacare," and sending Weisberg screenshots of their results. The results varied only in minor respects from person to person, and any differences did not appear to be ideology-related, leading Weisberg to conclude that a filter bubble was not in effect, and to write that the idea that most internet users were "feeding at the trough of a Daily Me" was overblown.[9] Weisberg asked Google to comment, and a spokesperson stated that algorithms were in place to deliberately "limit personalization and promote variety."[9] Book reviewer Paul Boutin did a similar experiment to Weisberg's among people with differing search histories and again found that the different searchers received nearly identical search results.[6] Interviewing programmers at Google, off the record, journalist Per Grankvist found that user data used to play a bigger role in determining search results but that Google, through testing, found that the search query is by far the best determinant of what results to display.[44]

There are reports that Google and other sites maintain vast "dossiers" of information on their users, which might enable them to personalize individual internet experiences further if they choose to do so. For instance, the technology exists for Google to keep track of users' histories even if they don't have a personal Google account or are not logged into one.[6] One report stated that Google had collected "10 years' worth" of information amassed from varying sources, such as Gmail, Google Maps, and other services besides its search engine,[10][failed verification] although a contrary report was that trying to personalize the internet for each user was technically challenging for an internet firm to achieve despite the huge amounts of available data.[citation needed] Analyst Doug Gross of CNN suggested that filtered searching seemed to be more helpful for consumers than for citizens, and would help a consumer looking for "pizza" find local delivery options based on a personalized search and appropriately filter out distant pizza stores.[10][failed verification] Organizations such as The Washington Post, The New York Times, and others have experimented with creating new personalized information services, with the aim of tailoring search results to those that users are likely to like or agree with.[9]

Academia studies and reactions

A scientific study from Wharton that analyzed personalized recommendations also found that these filters can create commonality, not fragmentation, in online music taste.[45] Consumers reportedly use the filters to expand their taste rather than to limit it.[45] Harvard law professor Jonathan Zittrain disputed the extent to which personalization filters distort Google search results, saying that "the effects of search personalization have been light."[9] Further, Google provides the ability for users to shut off personalization features if they choose[46] by deleting Google's record of their search history and setting Google not to remember their search keywords and visited links in the future.[6]

A study from Internet Policy Review addressed the lack of a clear and testable definition for filter bubbles across disciplines; this often results in researchers defining and studying filter bubbles in different ways.[47] Subsequently, the study explained a lack of empirical data for the existence of filter bubbles across disciplines[12] and suggested that the effects attributed to them may stem more from preexisting ideological biases than from algorithms. Similar views can be found in other academic projects, which also address concerns with the definitions of filter bubbles and the relationships between ideological and technological factors associated with them.[48] A critical review of filter bubbles suggested that "the filter bubble thesis often posits a special kind of political human who has opinions that are strong, but at the same time highly malleable" and that it is a "paradox that people have an active agency when they select content but are passive receivers once they are exposed to the algorithmically curated content recommended to them."[49]

A study by Oxford, Stanford, and Microsoft researchers examined the browsing histories of 1.2 million U.S. users of the Bing Toolbar add-on for Internet Explorer between March and May 2013. They selected 50,000 of those users who were active news consumers, then classified whether the news outlets they visited were left- or right-leaning, based on whether the majority of voters in the counties associated with user IP addresses voted for Obama or Romney in the 2012 presidential election. They then identified whether news stories were read after accessing the publisher's site directly, via the Google News aggregation service, web searches, or social media. The researchers found that while web searches and social media do contribute to ideological segregation, the vast majority of online news consumption consisted of users directly visiting left- or right-leaning mainstream news sites and consequently being exposed almost exclusively to views from a single side of the political spectrum. Limitations of the study included selection issues such as Internet Explorer users skewing higher in age than the general internet population; Bing Toolbar usage and the voluntary (or unknowing) sharing of browsing history selecting for users who are less concerned about privacy; the assumption that all stories in left-leaning publications are left-leaning, and the same for right-leaning; and the possibility that users who are not active news consumers may get most of their news via social media, and thus experience stronger effects of social or algorithmic bias than those users who essentially self-select their bias through their choice of news publications (assuming they are aware of the publications' biases).[50]

A study by Princeton University and New York University researchers aimed to study the impact of filter bubbles and algorithmic filtering on social media polarization. They used a mathematical model called the "stochastic block model" to test their hypothesis on the environments of Reddit and Twitter. The researchers gauged changes in polarization in regularized social media networks and non-regularized networks, specifically measuring the percent changes in polarization and disagreement on Reddit and Twitter. They found that polarization increased significantly, by 400%, in non-regularized networks, while polarization increased by 4% in regularized networks and disagreement by 5%.[51]
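For readers unfamiliar with the stochastic block model mentioned above, the following minimal sketch samples a small two-community network in which links inside a community are more likely than links across communities. It does not reproduce the study's method or its polarization measure; the function names, the probabilities, and the "within-block share" statistic are illustrative assumptions only.

```python
import random

def sample_sbm(block_sizes, p_within, p_between, seed=0):
    """Sample an undirected graph from a stochastic block model.

    Each pair of nodes is linked independently, with probability p_within
    if both nodes are in the same block and p_between otherwise.
    """
    rng = random.Random(seed)
    labels = [block for block, size in enumerate(block_sizes) for _ in range(size)]
    edges = []
    for i in range(len(labels)):
        for j in range(i + 1, len(labels)):
            p = p_within if labels[i] == labels[j] else p_between
            if rng.random() < p:
                edges.append((i, j))
    return labels, edges

def within_block_share(labels, edges):
    """Fraction of edges that connect two nodes of the same block."""
    if not edges:
        return 0.0
    same = sum(1 for i, j in edges if labels[i] == labels[j])
    return same / len(edges)

# Two hypothetical communities of 50 users each, densely linked internally.
labels, edges = sample_sbm([50, 50], p_within=0.3, p_between=0.02)
print(f"{len(edges)} edges, within-block share = {within_block_share(labels, edges):.2f}")
```

Raising `p_between` toward `p_within` yields a network with more cross-community ties, which is the kind of structural change such models let researchers vary in a controlled way.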

Platform studies

While algorithms do limit political diversity, some of the filter bubbles are the result of user choice.[52] A study by data scientists at Facebook found that users have one friend with contrasting views for every four Facebook friends that share an ideology.[53][54] No matter what Facebook's algorithm for its News Feed is, people are more likely to befriend/follow people who share similar beliefs.[53] The nature of the algorithm is that it ranks stories based on a user's history, resulting in a reduction of the "politically cross-cutting content by 5 percent for conservatives and 8 percent for liberals."[53] However, even when people are given the option to click on a link offering contrasting views, they still default to their most viewed sources.[53] "[U]ser choice decreases the likelihood of clicking on a cross-cutting link by 17 percent for conservatives and 6 percent for liberals."[53] A cross-cutting link is one that introduces a different point of view than the user's presumed point of view or what the website has pegged as the user's beliefs.[55]

A recent study from Levi Boxell, Matthew Gentzkow, and Jesse M. Shapiro suggests that online media isn't the driving force for political polarization.[56] The paper argues that polarization has been driven by the demographic groups that spend the least time online. The greatest ideological divide is experienced amongst Americans older than 75, while only 20% reported using social media as of 2012. In contrast, 80% of Americans aged 18–39 reported using social media as of 2012. The data suggests that the younger demographic isn't any more polarized in 2012 than it had been when online media barely existed in 1996. The study highlights differences between age groups and how news consumption remains polarized as people seek information that appeals to their preconceptions. Older Americans usually remain stagnant in their political views as traditional media outlets continue to be a primary source of news, while online media is the leading source for the younger demographic. Although algorithms and filter bubbles weaken content diversity, this study reveals that political polarization trends are primarily driven by pre-existing views and failure to recognize outside sources.

A 2020 study from Germany utilized the Big Five Psychology model to test the effects of individual personality, demographics, and ideologies on user news consumption.[57] Basing their study on the notion that the number of news sources that users consume impacts their likelihood to be caught in a filter bubble (with higher media diversity lessening the chances), their results suggest that certain demographics (higher age and male) along with certain personality traits (high openness) correlate positively with the number of news sources consumed by individuals. The study also found a negative ideological association between media diversity and the degree to which users align with right-wing authoritarianism. Beyond offering different individual user factors that may influence the role of user choice, this study also raises questions and associations between the likelihood of users being caught in filter bubbles and user voting behavior.[57]

The Facebook study found that it was "inconclusive" whether or not the algorithm played as big a role in filtering News Feeds as people assumed.[58] The study also found that "individual choice," or confirmation bias, likewise affected what gets filtered out of News Feeds.[58] Some social scientists criticized this conclusion because the point of protesting the filter bubble is that the algorithms and individual choice work together to filter out News Feeds.[59] They also criticized Facebook's small sample size, which is about "9% of actual Facebook users," and the fact that the study results are "not reproducible" due to the fact that the study was conducted by "Facebook scientists" who had access to data that Facebook does not make available to outside researchers.[60]

Though the study found that only about 15–20% of the average user's Facebook friends subscribe to the opposite side of the political spectrum, Julia Kaman from Vox theorized that this could have potentially positive implications for viewpoint diversity. These "friends" are often acquaintances with whom we would not likely share our politics without the internet. Facebook may foster a unique environment where a user sees and possibly interacts with content posted or re-posted by these "second-tier" friends. The study found that "24 percent of the news items liberals saw were conservative-leaning and 38 percent of the news conservatives saw was liberal-leaning."[61] "Liberals tend to be connected to fewer friends who share information from the other side, compared with their conservative counterparts."[62] This interplay has the ability to provide diverse information and sources that could moderate users' views.
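The notion of "cross-cutting" content used in the Facebook study can be made concrete with a small calculation: for a user with a given leaning, count the share of labeled news items that lean the other way. The sketch below is illustrative only; the two-label scheme, the field names, and the sample feed are assumptions, not the study's data or code.

```python
def cross_cutting_share(items, user_leaning):
    """Fraction of politically labeled items whose leaning differs from the user's."""
    labeled = [item for item in items if item["leaning"] in ("liberal", "conservative")]
    if not labeled:
        return 0.0
    cross = sum(1 for item in labeled if item["leaning"] != user_leaning)
    return cross / len(labeled)

# Hypothetical feed for a liberal user: three aligned stories, one cross-cutting
# story, and one unlabeled (non-political) story that is ignored.
feed = [
    {"title": "Story A", "leaning": "liberal"},
    {"title": "Story B", "leaning": "liberal"},
    {"title": "Story C", "leaning": "conservative"},
    {"title": "Story D", "leaning": "liberal"},
    {"title": "Story E", "leaning": None},
]

print(cross_cutting_share(feed, "liberal"))  # 0.25
```

Computing the same share at each stage (what friends post, what the ranking shows, what the user clicks) is one way to separate the contribution of the algorithm from that of individual choice.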

Similarly, a study of Twitter's filter bubbles by New York University concluded that "Individuals now have access to a wider span of viewpoints about news events, and most of this information is not coming through the traditional channels, but either directly from political actors or through their friends and relatives. Furthermore, the interactive nature of social media creates opportunities for individuals to discuss political events with their peers, including those with whom they have weak social ties."[63] According to these studies, social media may be diversifying information and opinions users come into contact with, though there is much speculation around filter bubbles and their ability to create deeper political polarization.

One driver and possible solution to the problem is the role of emotions in online content. A 2018 study shows that different emotions of messages can lead to polarization or convergence: joy is prevalent in emotional polarization, while sadness and fear play significant roles in emotional convergence.[64] Since it is relatively easy to detect the emotional content of messages, these findings can help to design more socially responsible algorithms by starting to focus on the emotional content of algorithmic recommendations.

Social bots have been utilized by different researchers to test polarization and related effects that are attributed to filter bubbles and echo chambers.[65][66] A 2018 study used social bots on Twitter to test deliberate user exposure to partisan viewpoints.[65] The study claimed it demonstrated partisan differences between exposure to differing views, although it warned that the findings should be limited to party-registered American Twitter users. One of the main findings was that after exposure to differing views (provided by the bots), self-registered Republicans became more conservative, whereas self-registered liberals showed less ideological change, if any at all. A different study from the People's Republic of China utilized social bots on Weibo, the largest social media platform in China, to examine the structure of filter bubbles with regard to their effects on polarization.[66] The study draws a distinction between two conceptions of polarization: one in which people with similar views form groups, share similar opinions, and block themselves from differing viewpoints (opinion polarization), and another in which people do not access diverse content and sources of information (information polarization). By utilizing social bots instead of human volunteers and focusing on information polarization rather than opinion polarization, the researchers concluded that there are two essential elements of a filter bubble: a large concentration of users around a single topic and a uni-directional, star-like structure that impacts key information flows.

In June 2018, the platform DuckDuckGo conducted a research study on the Google Web Browser Platform. For this study, 87 adults in various locations around the continental United States googled three keywords at the exact same time: immigration, gun control, and vaccinations. Even in private browsing mode, most people saw results unique to them. Google included certain links for some that it did not include for other participants, and the News and Videos infoboxes showed significant variation. Google publicly disputed these results saying that Search Engine Results Page (SERP) personalization is mostly a myth. Google Search Liaison, Danny Sullivan, stated that "Over the years, a myth has developed that Google Search personalizes so much that for the same query, different people might get significantly different results from each other. This isn't the case. Results can differ, but usually for non-personalized reasons."[67]

When filter bubbles are in place, they can create specific moments that scientists call 'Whoa' moments. A 'Whoa' moment occurs when an article, ad, post, etc., appears on your computer that relates to a current action or the current use of an object. Scientists adopted this term after a young woman, going about a daily routine that included drinking coffee, opened her computer and noticed an advertisement for the same brand of coffee that she was drinking. "Sat down and opened up Facebook this morning while having my coffee, and there they were two ads for Nespresso. Kind of a 'whoa' moment when the product you're drinking pops up on the screen in front of you."[68] "Whoa" moments occur when people are "found," which means that advertisement algorithms target specific users based on their "click behavior" to increase sales revenue.

Several designers have developed tools to counteract the effects of filter bubbles (see § Countermeasures).[69] Swiss radio station SRF voted the word filterblase (the German translation of filter bubble) word of the year 2016.[70]


Countermeasures

By individuals

In The Filter Bubble: What the Internet Is Hiding from You,[71] internet activist Eli Pariser highlights how the increasing occurrence of filter bubbles further emphasizes the value of one's bridging social capital as defined by Robert Putnam. Pariser argues that filter bubbles reinforce a sense of social homogeneity, which weakens ties between people with potentially diverging interests and viewpoints.[72] In that sense, high bridging capital may promote social inclusion by increasing our exposure to a space that goes beyond self-interests. Fostering one's bridging capital, such as by connecting with more people in an informal setting, may be an effective way to reduce the filter bubble phenomenon.

Users can take many actions to burst through their filter bubbles, for example by making a conscious effort to evaluate what information they are exposing themselves to, and by thinking critically about whether they are engaging with a broad range of content.[73] Users can consciously avoid news sources that are unverifiable or weak. Chris Glushko, the VP of Marketing at IAB, advocates using fact-checking sites to identify fake news.[74] Technology can also play a valuable role in combating filter bubbles.[75]

Some browser plug-ins aim to help people step out of their filter bubbles and make them aware of their personal perspectives; thus, these media show content that contradicts their beliefs and opinions. In addition to plug-ins, there are apps created with the mission of encouraging users to open their echo chambers. News apps such as Read Across the Aisle nudge users to read different perspectives if their reading pattern is biased towards one side/ideology.[76] Although apps and plug-ins are tools humans can use, Eli Pariser stated "certainly, there is some individual responsibility here to really seek out new sources and people who aren't like you."[52]

Since web-based advertising can further the effect of the filter bubbles by exposing users to more of the same content, users can block much advertising by deleting their search history, turning off targeted ads, and downloading browser extensions. Some use anonymous or non-personalized search engines such as YaCy, DuckDuckGo, Qwant, Startpage.com, Disconnect, and Searx in order to prevent companies from gathering their web-search data. Swiss daily Neue Zürcher Zeitung is beta-testing a personalized news engine app which uses machine learning to guess what content a user is interested in, while "always including an element of surprise"; the idea is to mix in stories which a user is unlikely to have followed in the past.[77]
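The idea of "always including an element of surprise" can be sketched as a recommender that fills most slots with the user's best-matching stories but reserves a slot for a story they are least likely to have followed. The source does not describe how the Neue Zürcher Zeitung app works internally, so everything below (the function, the interest scores, the one-slot rule) is a hypothetical illustration.

```python
def recommend(items, interest, slots=4, surprise_slots=1):
    """Fill most slots by predicted interest, but reserve some for surprises.

    `interest` maps a topic to a score in [0, 1]; unknown topics score 0.
    """
    ranked = sorted(items, key=lambda item: interest.get(item["topic"], 0.0), reverse=True)
    cut = slots - surprise_slots
    familiar = ranked[:cut]
    # Surprise picks: the lowest-scoring stories among those not already chosen.
    surprises = ranked[cut:][::-1][:surprise_slots]
    return familiar + surprises

# Hypothetical interest profile learned from past reading.
interest = {"sports": 0.9, "tech": 0.7, "politics": 0.4, "arts": 0.05}
items = [
    {"title": "Cup final report", "topic": "sports"},
    {"title": "New phone review", "topic": "tech"},
    {"title": "Budget debate", "topic": "politics"},
    {"title": "Gallery opening", "topic": "arts"},
    {"title": "Transfer rumours", "topic": "sports"},
]

print([item["title"] for item in recommend(items, interest)])
```

Here the final slot goes to the arts story even though it has the lowest predicted interest, so the reader still encounters something outside their usual pattern.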

The European Union is taking measures to lessen the effect of the filter bubble. The European Parliament is sponsoring inquiries into how filter bubbles affect people's ability to access diverse news.[78] Additionally, it introduced a program aimed at educating citizens about social media.[79] In the U.S., the CSCW panel suggests the use of news aggregator apps to broaden media consumers' news intake. News aggregator apps scan all current news articles and direct you to different viewpoints regarding a certain topic. Users can also use a diversely-aware news balancer which visually shows the media consumer if they are leaning left or right when it comes to reading the news, indicating right-leaning with a bigger red bar or left-leaning with a bigger blue bar. A study evaluating this news balancer found "a small but noticeable change in reading behavior, toward more balanced exposure, among users seeing the feedback, as compared to a control group".[80]
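The feedback shown by such a news balancer can be approximated with a simple tally of what a user has read. The sketch below is a text-only stand-in for the red and blue bars described above; the per-article "left"/"right" labels, the bar width, and the output format are assumptions made for illustration, not the evaluated tool's design.

```python
def balance_bar(reading_history, width=20):
    """Render a two-sided text bar from a list of 'left'/'right' article labels."""
    left = sum(1 for lean in reading_history if lean == "left")
    right = sum(1 for lean in reading_history if lean == "right")
    total = left + right
    if total == 0:
        return "no labeled articles read yet"
    blue = round(width * left / total)  # left-leaning share, shown on the blue side
    red = width - blue                  # right-leaning share, shown on the red side
    return "blue " + "#" * blue + "|" + "#" * red + " red"

# Hypothetical history: six left-leaning and two right-leaning articles.
history = ["left"] * 6 + ["right"] * 2
print(balance_bar(history, width=8))  # blue ######|## red
```

A longer run of marks on one side tells the reader at a glance that their recent reading leans that way, which is the nudge the evaluated tool relies on.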

By media companies

In light of recent concerns about information filtering on social media, Facebook acknowledged the presence of filter bubbles and has taken strides toward removing them.[81] In January 2017, Facebook removed personalization from its Trending Topics list in response to problems with some users not seeing highly talked-about events there.[82] Facebook's strategy is to reverse the Related Articles feature that it had implemented in 2013, which would post related news stories after the user read a shared article. Now, the revamped strategy would flip this process and post articles from different perspectives on the same topic. Facebook is also attempting to go through a vetting process whereby only articles from reputable sources will be shown. Along with the founder of Craigslist and a few others, Facebook has invested $14 million into efforts "to increase trust in journalism around the world, and to better inform the public conversation".[81] The idea is that even if people are only reading posts shared from their friends, at least these posts will be credible.

Similarly, as of January 30, 2018, Google has also acknowledged the existence of filter bubble difficulties within its platform. Because current Google searches pull algorithmically ranked results based upon "authoritativeness" and "relevancy", which show and hide certain search results, Google is seeking to combat this. By training its search engine to recognize the intent of a search inquiry rather than the literal syntax of the question, Google is attempting to limit the size of filter bubbles. The initial phase of this training was scheduled to be introduced in the second quarter of 2018. Questions that involve bias and/or controversial opinions will not be addressed until a later time, which leaves a larger question open: whether the search engine acts as an arbiter of truth or as a knowledgeable guide by which to make decisions.[83]

In April 2017, news surfaced that Facebook, Mozilla, and Craigslist had contributed the majority of a $14M donation to CUNY's "News Integrity Initiative", aimed at eliminating fake news and creating more honest news media.[84]

Later, in August, Mozilla, makers of the Firefox web browser, announced the formation of the Mozilla Information Trust Initiative (MITI). The MITI would serve as a collective effort to develop products, research, and community-based solutions to combat the effects of filter bubbles and the proliferation of fake news. Mozilla's Open Innovation team leads the initiative, striving to combat misinformation, with a specific focus on the product with regard to literacy, research and creative interventions.[85]

Ethical implications

As the popularity of cloud services increases, personalized algorithms used to construct filter bubbles are expected to become more widespread.[86] Scholars have begun considering the effect of filter bubbles on the users of social media from an ethical standpoint, particularly concerning the areas of personal freedom, security, and information bias.[87] Filter bubbles in popular social media and personalized search sites can determine the particular content seen by users, often without their direct consent or cognizance,[86] due to the algorithms used to curate that content. Self-created content manifested from behavior patterns can lead to partial information blindness.[88] Critics of the use of filter bubbles speculate that individuals may lose autonomy over their own social media experience and have their identities socially constructed as a result of the pervasiveness of filter bubbles.[86]

Technologists, social media engineers, and computer specialists have also examined the prevalence of filter bubbles.[89] Mark Zuckerberg, founder of Facebook, and Eli Pariser, author of The Filter Bubble, have expressed concerns regarding the risks of privacy and information polarization.[90][91] The information of the users of personalized search engines and social media platforms is not private, though some people believe it should be.[90] The concern over privacy has resulted in a debate as to whether or not it is moral for information technologists to take users' online activity and manipulate future exposure to related information.[91]

Some scholars have expressed concerns regarding the effects of filter bubbles on individual and social well-being, for example the dissemination of health information to the general public and the potential for internet search engines to alter health-related behavior.[16][17][18][92] A 2019 multi-disciplinary book reported research and perspectives on the roles filter bubbles play with regard to health misinformation.[18] Drawing from various fields such as journalism, law, medicine, and health psychology, the book addresses different controversial health beliefs (e.g. alternative medicine and pseudoscience) as well as potential remedies to the negative effects of filter bubbles and echo chambers on different topics in health discourse. A 2016 study on the potential effects of filter bubbles on search engine results related to suicide found that algorithms play an important role in whether or not helplines and similar search results are displayed to users, and discussed the implications the research may have for health policies.[17] Another 2016 study from the Croatian Medical Journal proposed some strategies for mitigating the potentially harmful effects of filter bubbles on health information, such as informing the public more about filter bubbles and their associated effects, users choosing to try alternative [to Google] search engines, and more explanation of the processes search engines use to determine their displayed results.[16]

Since the content seen by individual social media users is influenced by algorithms that produce filter bubbles, users of social media platforms are more susceptible to confirmation bias,[93] and may be exposed to biased, misleading information.[94] Social sorting and other unintentional discriminatory practices are also anticipated as a result of personalized filtering.[95]

In light of the 2016 U.S. presidential election, scholars have likewise expressed concerns about the effect of filter bubbles on democracy and democratic processes, as well as the rise of "ideological media".[11] These scholars fear that users will be unable to "[think] beyond [their] narrow self-interest" as filter bubbles create personalized social feeds, isolating them from diverse points of view and their surrounding communities.[96] For this reason, an increasingly discussed possibility is to design social media with more serendipity, that is, to proactively recommend content that lies outside one's filter bubble, including challenging political information, and, eventually, to provide empowering filters and tools to users.[97][98][99] A related concern is how filter bubbles contribute to the proliferation of "fake news" and how this may influence political leaning, including how users vote.[11][100][101]

Revelations in March 2018 of Cambridge Analytica's harvesting and use of user data from at least 87 million Facebook profiles during the 2016 presidential election highlight the ethical implications of filter bubbles.[102] Christopher Wylie, co-founder of Cambridge Analytica and whistleblower, detailed how the firm had the ability to develop "psychographic" profiles of those users and use the information to shape their voting behavior.[103] Access to user data by third parties such as Cambridge Analytica can exacerbate and amplify the filter bubbles users have created, artificially increasing existing biases and further dividing societies.

Dangers

Filter bubbles have stemmed from a surge in media personalization, which can trap users. The use of AI to personalize offerings can lead to users viewing only content that reinforces their own viewpoints without challenging them. Social media websites like Facebook may also present content in a way that makes it difficult for users to determine the source of the content, leading them to decide for themselves whether the source is reliable or fake.[104] That can lead to people becoming used to hearing what they want to hear, which can cause them to react more radically when they see an opposing viewpoint. The filter bubble may cause the person to see any opposing viewpoints as incorrect and so could allow the media to force views onto consumers.[105][104][106]

Researchers explain that the filter bubble reinforces what one is already thinking.[107] This is why it is extremely important to utilize resources that offer various points of view.[107]

See also

- Algorithmic curation

- Algorithmic radicalization

- Allegory of the Cave

- Attention inequality

- Communal reinforcement

- Content farm

- Dead Internet theory

- Deradicalization

- Echo chamber (media)

- False consensus effect

- Group polarization

- Groupthink

- Influencer speak

- Infodemic

- Information silo

- Media consumption

- Narrowcasting

- Search engine manipulation effect

- Selective exposure theory

- Serendipitous discovery, an antithesis of filter bubble

- The Social Dilemma

- Stereotype

Notes

- The term cyber-balkanization (sometimes with a hyphen) is a hybrid of cyber, relating to the internet, and Balkanization, referring to that region of Europe that was historically subdivided by languages, religions and cultures; the term was coined in a paper by MIT researchers Van Alstyne and Brynjolfsson.

References

Further reading

External links
